- Services

- Data Analysis services

- Sample Work

Data Analysis services

- Secondary Qualitative Research Services

- Secondary Quantitative Research Services

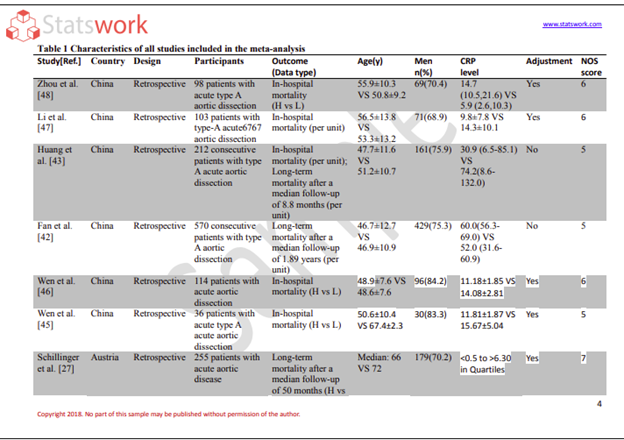

- Meta-Analysis Research services

- Sample Work

Meta-Analysis Research Services

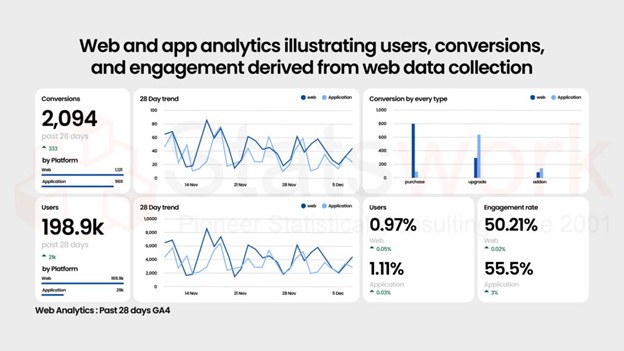

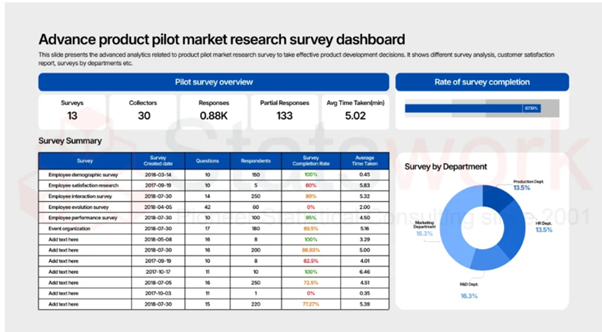

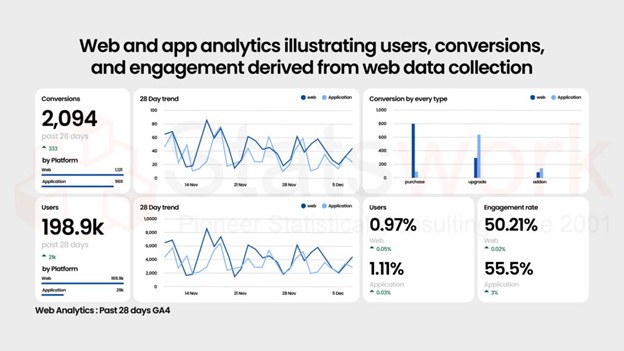

- Data Collection Services

- Sample Work

Data Collection Services

- Statistical & Biostatistics services

- Sample Work

Statistical Programming & Biostatistics services

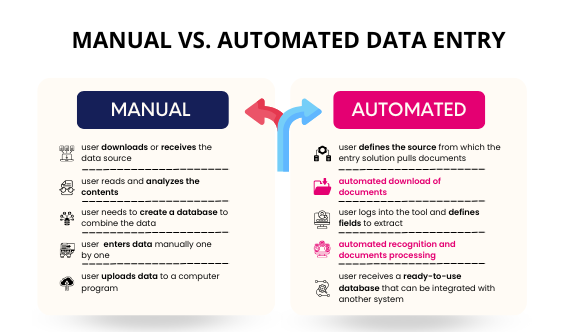

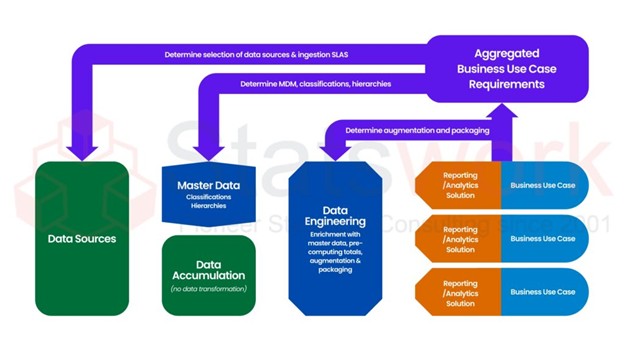

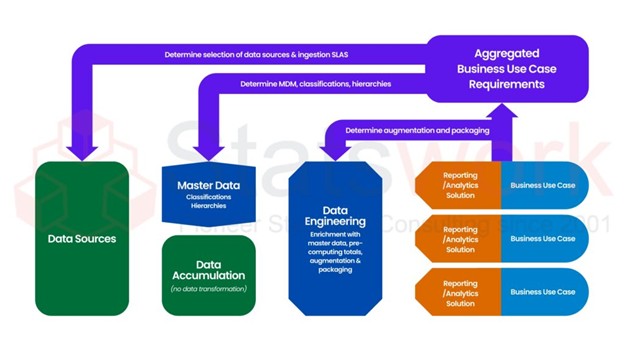

- Data Management Services

- Sample Work

Data Management Services

- Research methodology services

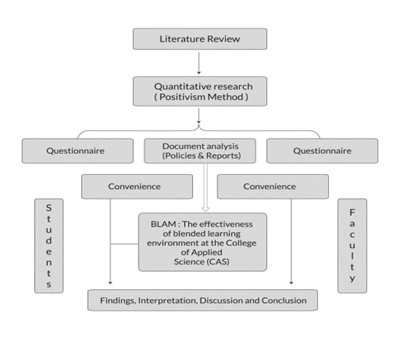

- Sample Work

Research methodology services

- Tool Development Services

- Sample Work

Tool development services

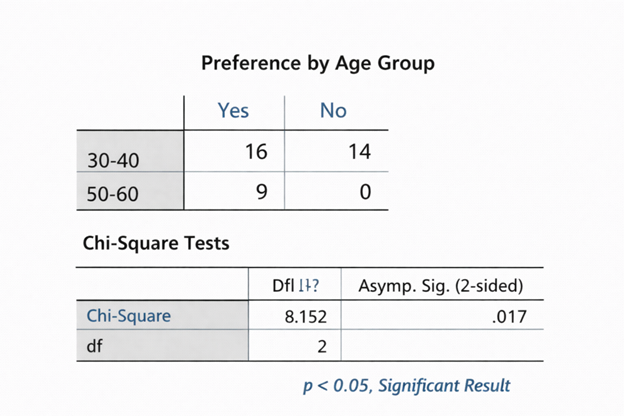

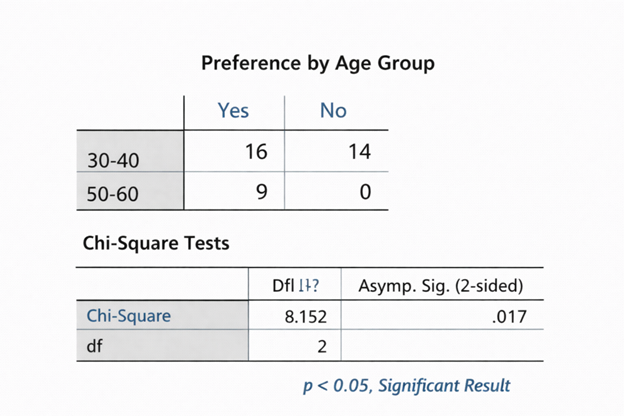

- Statistical Interpretation services

-

Statistical Interpretation services

- Sample Work

Statistical Interpretation services

-

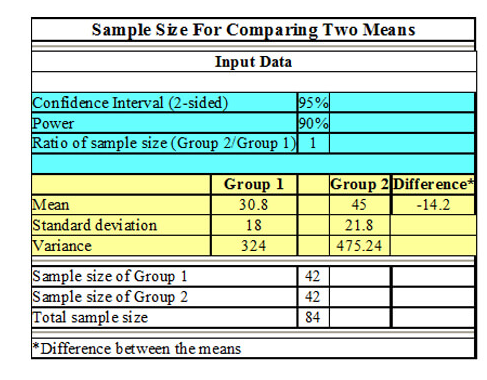

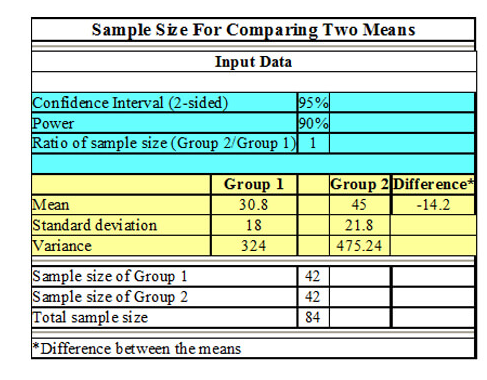

- Sample Size Calculation Services

-

Sample Size Calculation Services

- Sample Work

Sample Size Calculation Services

-

- AI & ML Services

-

Artificial Intelligence and Machine Learning Services

- Sample Work

Artificial Intelligence and Machine Learning Services

-

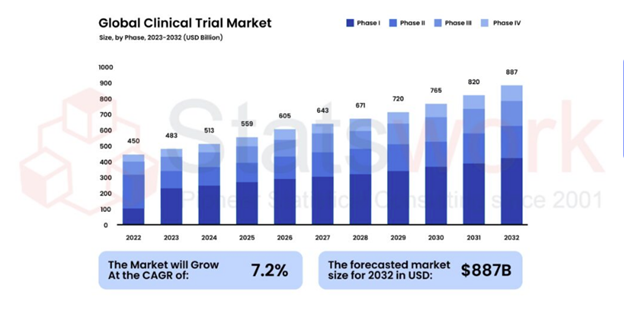

- Meaningful Visualization Services

- Thought Leadership Services

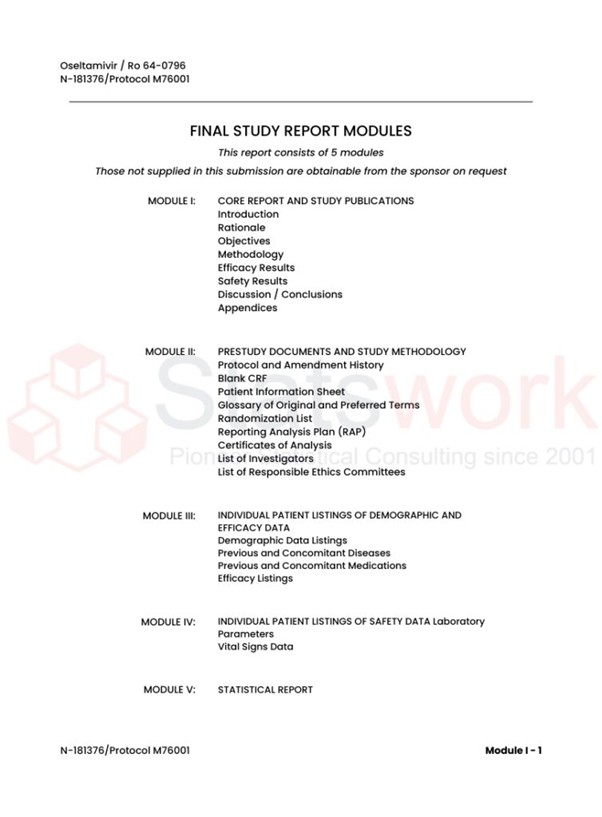

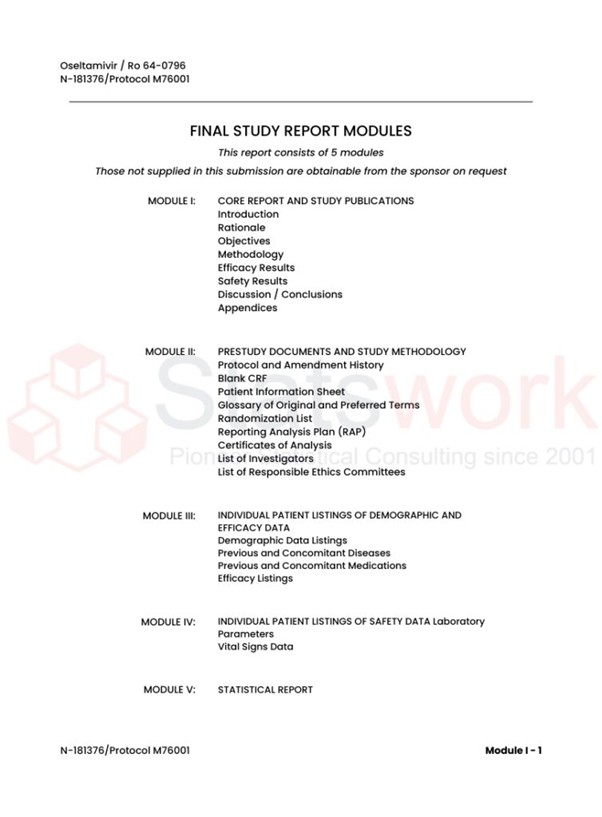

- Report Generation Services

-

Report generation Service

- Sample Work

Report generation Services

-

- Data Analysis services

- Industries

- About Us

- Insights

- Blog

- Contact Us

- Services

- Data Analysis services

- Sample Work

Data Analysis services

- Secondary Qualitative Research Services

- Secondary Quantitative Research Services

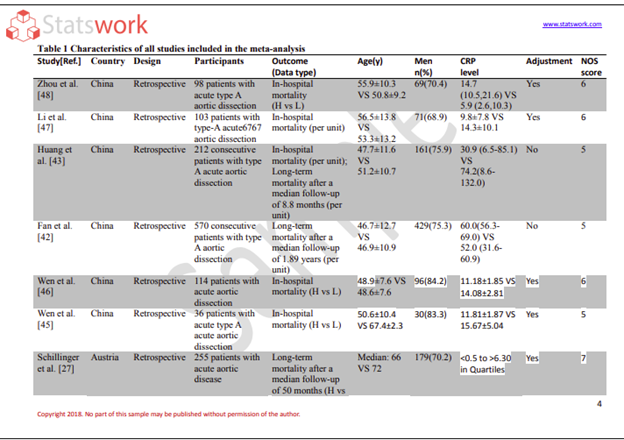

- Meta-Analysis Research services

- Sample Work

Meta-Analysis Research Services

- Data Collection Services

- Sample Work

Data Collection Services

- Statistical & Biostatistics services

- Sample Work

Statistical Programming & Biostatistics services

- Data Management Services

- Sample Work

Data Management Services

- Research methodology services

- Sample Work

Research methodology services

- Tool Development Services

- Sample Work

Tool development services

- Statistical Interpretation services

-

Statistical Interpretation services

- Sample Work

Statistical Interpretation services

-

- Sample Size Calculation Services

-

Sample Size Calculation Services

- Sample Work

Sample Size Calculation Services

-

- AI & ML Services

-

Artificial Intelligence and Machine Learning Services

- Sample Work

Artificial Intelligence and Machine Learning Services

-

- Meaningful Visualization Services

- Thought Leadership Services

- Report Generation Services

-

Report generation Service

- Sample Work

Report generation Services

-

- Data Analysis services

- Industries

- About Us

- Insights

- Blog

- Contact Us

How to Verify Accuracy of Secondary Data for Research


Secondary data plays a central role in modern research because it gives access to large volumes of data without the cost of primary data collection. However, its accuracy must be confirmed before it is used for analysis or decision-making. Inaccurate data can lead to false conclusions, misleading insights, and unreliable results [1].

This blog explains how to verify the accuracy of secondary data, covering practical techniques, strategies, and best practices.

What is Secondary Data?

Secondary data is information that has already been collected, processed, and published by other researchers, organizations, or institutions.

The sources of secondary data include:

- Government reports and national censuses

- Academic journals and publications

- Public and open data sources

- Healthcare data sources [2]

Secondary data saves both cost and time. However, it must be verified before it is used.

Why Secondary Data Accuracy Matters

Inaccurate or outdated data undermines the quality of research. Ensuring data reliability is important for:

- Drawing valid research conclusions

- Making evidence-based decisions

- Avoiding bias and inconsistency

- Maintaining credibility in academic and business research [3]

Reliability matters even more in healthcare, where decisions directly affect patients.

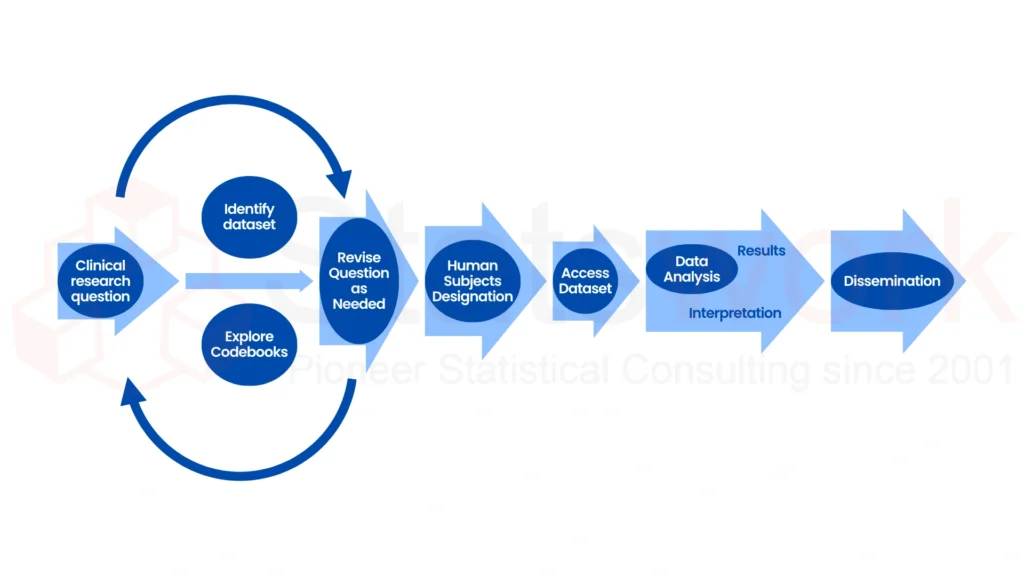

Figure 1: Secondary Data Research Workflow and Validation Process

Key Methods to Verify Secondary Data Accuracy

1. Source Evaluation

The first step in evaluating secondary data is to check the credibility of its source.

Look for:

- Reputable sources (governments, universities, international institutions)

- Peer-reviewed articles [4]

- A transparent data collection process

Reliable sources increase trust in the data and reduce the risk of misinformation.

2. Cross-Verification of Data from Multiple Sources

Cross-verifying data against multiple sources is one of the most effective ways to validate it.

Compare the same figures across sources, check that they are consistent, and investigate any discrepancies. Where possible, use several publicly available datasets for the comparison [5].

This guards against relying on a single flawed source.
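As a rough illustration of cross-verification, the sketch below compares the same indicator from two sources and flags values that differ by more than a chosen relative tolerance. The function name `cross_verify`, the 5% tolerance, and the sample figures are all hypothetical and chosen only for illustration.

```python
def cross_verify(primary, reference, rel_tolerance=0.05):
    """Compare values that share the same key across two sources.

    Returns a dict mapping each key to a status string.
    The 5% default tolerance is an assumption, not a standard.
    """
    report = {}
    for key in sorted(set(primary) | set(reference)):
        if key not in primary or key not in reference:
            report[key] = "missing in one source"
            continue
        a, b = primary[key], reference[key]
        # Relative difference, measured against the larger magnitude.
        scale = max(abs(a), abs(b))
        diff = 0.0 if scale == 0 else abs(a - b) / scale
        report[key] = "consistent" if diff <= rel_tolerance else "discrepancy"
    return report


# Made-up population figures (in millions) from two imaginary sources.
source_a = {"Region A": 10.2, "Region B": 4.8, "Region C": 7.5}
source_b = {"Region A": 10.3, "Region B": 6.1, "Region D": 2.0}

report = cross_verify(source_a, source_b)
for region, status in report.items():
    print(region, "->", status)
```

A flagged discrepancy is a prompt to investigate, not proof that either source is wrong. The two sources may simply use different definitions or reference dates.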

3. Data Collection Methodology

Understanding how the data was collected is essential for judging its reliability.

Review the following:

- Data collection tools and instruments

- Timeframe of data collection

- Potential biases in the collection process [5]

This review shows whether the data fits the needs of your research.

Figure 2: Common Sources of Secondary Data for Research

4. Check for Timeliness and Relevance

Outdated data can lead to inaccurate conclusions.

- Confirm that the data is recent and relevant to your research question.

- Check the publication date.

- Confirm that the data reflects current research trends.

This is especially important in fast-changing fields such as healthcare and technology [3].
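A timeliness check can be automated once you decide how old is too old. The sketch below is a minimal example. The helper `is_current` and the five-year cutoff are assumptions for illustration, and the right cutoff depends on the field.

```python
from datetime import date


def is_current(published, max_age_years, today=None):
    """Return True if a dataset is no older than max_age_years."""
    today = today or date.today()
    age_years = (today - published).days / 365.25
    return age_years <= max_age_years


# A fixed reference date keeps the example reproducible.
reference_day = date(2024, 1, 1)

# Hypothetical datasets with their publication dates.
datasets = {
    "household_survey": date(2016, 6, 1),
    "hospital_admissions": date(2022, 3, 15),
}

for name, published in datasets.items():
    status = "current" if is_current(published, 5, today=reference_day) else "outdated"
    print(name, "->", status)
```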

5. Data Cleaning and Preprocessing

Data cleaning is an important part of verifying the accuracy of data. The main steps are:

- Remove duplicate records.

- Handle missing values.

- Identify outliers.

- Standardize formats [2].

Clean data is more reliable and supports accurate analysis.
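The sketch below shows three of these steps (format standardization, duplicate removal, and missing-value handling) on a small list of records. The field names, the sample records, and the choice of median imputation are assumptions made for the example. Outlier identification is left out to keep it short.

```python
from statistics import median


def clean_records(records):
    """Standardize formats, drop exact duplicates, and fill missing incomes."""
    # 1. Standardize formats: trim whitespace and use consistent casing.
    standardized = [
        {"id": r["id"], "country": r["country"].strip().title(), "income": r["income"]}
        for r in records
    ]

    # 2. Remove exact duplicates while preserving the original order.
    seen, unique = set(), []
    for r in standardized:
        key = (r["id"], r["country"], r["income"])
        if key not in seen:
            seen.add(key)
            unique.append(r)

    # 3. Handle missing values: fill with the median of observed values
    #    and keep a flag so that imputed values stay traceable.
    observed = [r["income"] for r in unique if r["income"] is not None]
    fill = median(observed) if observed else None
    for r in unique:
        r["income_imputed"] = r["income"] is None
        if r["income"] is None:
            r["income"] = fill
    return unique


# Made-up records with a duplicate, inconsistent casing, and a missing value.
raw = [
    {"id": 1, "country": " india ", "income": 52000},
    {"id": 1, "country": "India", "income": 52000},
    {"id": 2, "country": "KENYA", "income": None},
    {"id": 3, "country": "brazil", "income": 48000},
]

cleaned = clean_records(raw)
for row in cleaned:
    print(row)
```

Standardizing before deduplicating matters here: the first two records only become identical once whitespace and casing are normalized.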

6. Statistical Validation Techniques

Statistical methods also help validate secondary data.

- Use descriptive statistics to summarize the data.

- Apply outlier detection methods.

- Use correlation analysis to check that relationships between variables are logical [4].
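The three techniques above can be sketched with Python's standard library. The example uses the interquartile range (IQR) rule for outliers and a hand-written Pearson correlation. Both are common choices, not the only valid ones, and the height and weight values are invented for illustration.

```python
from statistics import mean, median, quantiles, stdev


def iqr_outliers(values, k=1.5):
    """Return values outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sum((x - mx) ** 2 for x in xs) ** 0.5
    sy = sum((y - my) ** 2 for y in ys) ** 0.5
    return cov / (sx * sy)


# Invented measurements; 310 cm is a deliberate data-entry error.
heights_cm = [150, 160, 165, 170, 172, 175, 180, 310]
weights_kg = [50, 58, 62, 68, 70, 74, 80, 85]

# Descriptive statistics: a large gap between mean and median hints at skew.
print("mean:", mean(heights_cm))
print("median:", median(heights_cm))
print("stdev:", stdev(heights_cm))

# Outlier detection with the IQR rule.
print("outliers:", iqr_outliers(heights_cm))

# Correlation: height and weight are expected to be positively related.
print("correlation:", pearson(heights_cm, weights_kg))
```

A correlation with an unexpected sign or magnitude does not prove the data is wrong, but it is a useful signal that a variable may be mislabeled or miscoded.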

7. Consistency and Logic Checks

Check whether the data is logically sound.

- Check for impossible values (for instance, a negative age)

- Check for internal consistency between variables

- Check that relationships between data points make sense

Logical checks are a simple and effective way to catch errors in secondary data.
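Logic checks translate naturally into small rules applied to each record. In the sketch below, the field names, the plausible age range of 0 to 120, and the sample records are all assumptions. Real rules should come from the dataset's documentation.

```python
from datetime import date


def logic_check(record):
    """Return a list of logical problems found in one record."""
    problems = []
    age = record["age"]
    # Impossible values: the 0-120 range is an assumed plausibility bound.
    if age < 0 or age > 120:
        problems.append("age outside plausible range")
    # Internal consistency between variables.
    if record["years_employed"] > age:
        problems.append("years_employed exceeds age")
    # Relationship between data points: discharge cannot precede admission.
    if record["discharged"] < record["admitted"]:
        problems.append("discharged before admitted")
    return problems


# Made-up records; the second and third contain deliberate errors.
records = [
    {"id": 1, "age": 34, "years_employed": 10,
     "admitted": date(2023, 1, 5), "discharged": date(2023, 1, 9)},
    {"id": 2, "age": -4, "years_employed": 2,
     "admitted": date(2023, 2, 1), "discharged": date(2023, 2, 3)},
    {"id": 3, "age": 52, "years_employed": 20,
     "admitted": date(2023, 3, 10), "discharged": date(2023, 3, 2)},
]

for r in records:
    print(r["id"], logic_check(r) or "ok")
```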

8. Metadata and Documentation Review

Metadata is information that provides context for a dataset.

- Check the definitions of the variables

- Check the units of measurement

- Check the stated limitations and assumptions

Reviewing the documentation helps avoid incorrect interpretations [5].
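Part of a metadata review can be scripted by comparing a dataset's columns against its data dictionary. The sketch below assumes a hypothetical dictionary layout with a "definition" and a "unit" per variable. Real codebooks vary, so treat this as an outline only.

```python
def review_metadata(columns, data_dictionary):
    """Compare dataset columns with a data dictionary and report gaps."""
    documented = set(data_dictionary)
    present = set(columns)
    return {
        # Columns in the data that the documentation never describes.
        "undocumented_columns": sorted(present - documented),
        # Variables the documentation describes but the data lacks.
        "documented_but_absent": sorted(documented - present),
        # Documented variables with an empty definition or unit.
        "missing_definition": sorted(
            name for name, meta in data_dictionary.items() if not meta.get("definition")
        ),
        "missing_unit": sorted(
            name for name, meta in data_dictionary.items() if not meta.get("unit")
        ),
    }


# Invented columns and dictionary entries for illustration.
columns = ["patient_id", "weight", "height", "bmi"]
data_dictionary = {
    "patient_id": {"definition": "Anonymized patient identifier", "unit": "n/a"},
    "weight": {"definition": "Body weight at enrolment", "unit": "kg"},
    "height": {"definition": "Standing height", "unit": ""},
    "smoker": {"definition": "", "unit": "n/a"},
}

findings = review_metadata(columns, data_dictionary)
for issue, names in findings.items():
    print(issue, "->", names)
```

A script like this only finds gaps in the documentation. Judging whether the stated limitations and assumptions are acceptable still requires reading it.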

Special Focus: Healthcare Research

Accurate secondary data is critical in healthcare because it directly affects patient outcomes and clinical decisions.

Methods for verifying secondary data in healthcare include:

- Cross-verifying the data against clinical databases and literature

- Verifying the data against medical standards [4]

- Ensuring the data complies with ethical and regulatory guidelines

- Reviewing the data collection procedures of the healthcare systems involved

Accurate data supports safe decision-making in healthcare.

Common Challenges in Verifying Secondary Data

Researchers may encounter the following challenges:

- Incomplete data

- Lack of transparency about data sources

- Inconsistent data formats

- Bias in data collection

Data verification, cleaning, and validation help overcome these challenges.

Best Practices for Ensuring Data Reliability

To ensure data reliability and accuracy:

- Always use credible and verified data sources

- Use multiple validation methods

- Document your verification process

- Use statistical tools for further analysis

- Keep your datasets up to date [3]

These practices improve the reliability of secondary datasets and, in turn, overall research quality.

Tools for Data Verification and Validation

Some tools that can be used to validate secondary datasets include:

- Excel / Google Sheets – Basic cleaning and validation of datasets

- R / Python – Advanced statistical analysis and cleaning of datasets

- SPSS / SAS – Structured validation of datasets

- OpenRefine – Data cleaning

These tools make the verification process more efficient.

Conclusion

Verifying the accuracy of secondary data is an important step in any research methodology. A structured approach that combines data verification, data cleaning, and statistical validation makes research outcomes more reliable [5].

Whether you work with public domain data, health-related data, or any other kind of secondary data, knowing how to verify its accuracy is essential for sound research and decision-making.

Transform your secondary data into reliable, research-ready insights. Connect with Statswork for expert validation and precision-driven research support.

Frequently Asked Questions (FAQs)

1. How to verify accuracy of secondary data?

- Evaluate source credibility

- Cross-check with multiple datasets

- Perform data cleaning and validation

- Use statistical analysis

2. What is secondary data accuracy?

- Refers to the correctness and reliability of existing datasets

- Ensures data is suitable for research use

- Prevents errors in analysis

3. Why is data verification important in research?

- Ensures reliable results

- Reduces bias and errors

- Improves research credibility

4. How to validate secondary datasets effectively?

- Review methodology and metadata

- Conduct consistency checks

- Apply statistical validation techniques

5. How does Statswork ensure secondary data accuracy?

- Uses advanced data verification techniques

- Performs thorough data cleaning and preprocessing

- Applies statistical validation methods for accuracy

- Cross-verifies data using multiple reliable sources

- Ensures alignment with research objectives

6. Can Statswork help in validating secondary datasets for research?

- Yes, Statswork provides end-to-end secondary data validation services

- Supports academic, healthcare, and business research

- Ensures data reliability and consistency

- Uses industry-standard tools like R, Python, and SPSS

- Delivers accurate, research-ready datasets

References:

1. Emanuelson, U., & Egenvall, A. (2014). The data–Sources and validation. Preventive Veterinary Medicine, 113(3), 298-303. https://www.sciencedirect.com

2. Sun, M., & Lipsitz, S. R. (2018, April). Comparative effective research methodology using secondary data: A starting user’s guide. In Urologic Oncology: Seminars and Original Investigations (Vol. 36, No. 4, pp. 174-182). Elsevier. https://www.sciencedirect.com

3. Church, R. M. (2002). The effective use of secondary data. Learning and Motivation, 33(1), 32-45. https://www.sciencedirect.com

4. Rabianski, J. S. (2003). Primary and secondary data: Concepts, concerns, errors, and issues. The Appraisal Journal, 71(1), 43. https://www.proquest.com

5. Windle, P. E. (2010). Secondary data analysis: is it useful and valid? Journal of PeriAnesthesia Nursing, 25(5), 322-324. https://www.jopan.org