- Services

- Data Analysis services

- Sample Work

Data Analysis services

- Secondary Qualitative Research Services

- Secondary Quantitative Research Services

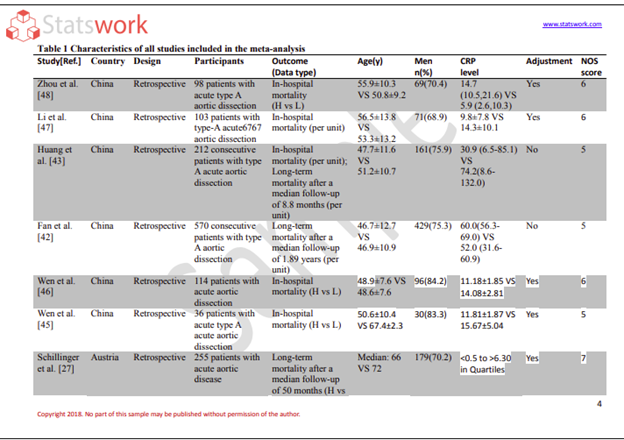

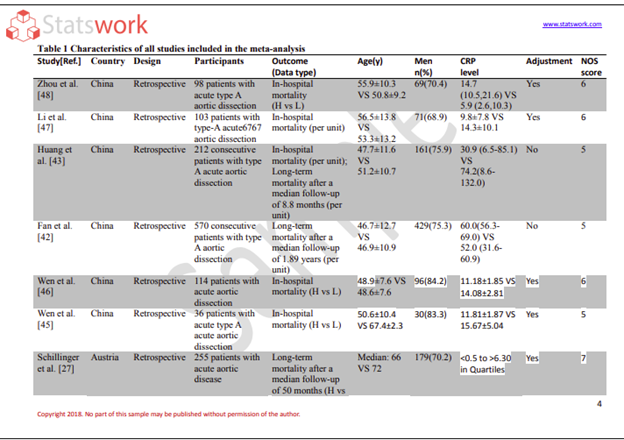

- Meta-Analysis Research services

- Sample Work

Meta-Analysis Research Services

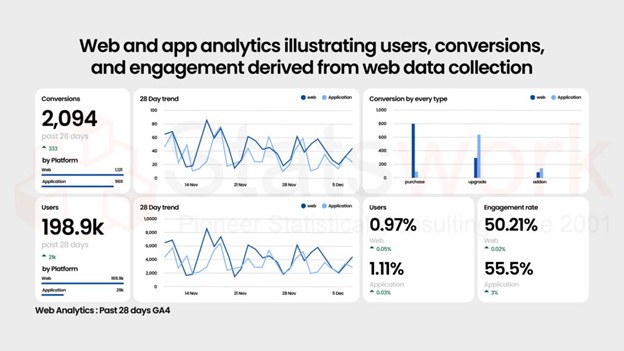

- Data Collection Services

- Sample Work

Data Collection Services

- Statistical & Biostatistics services

- Sample Work

Statistical Programming & Biostatistics services

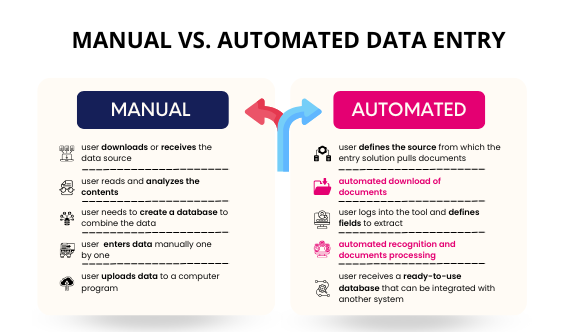

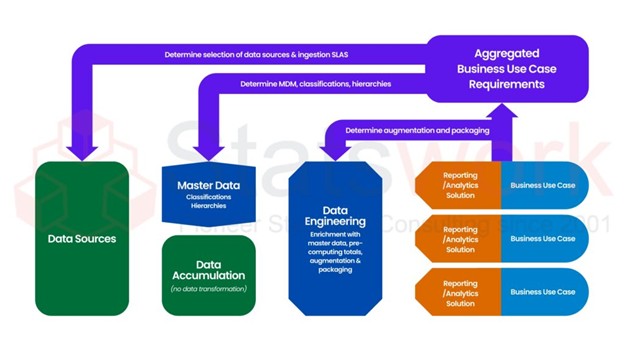

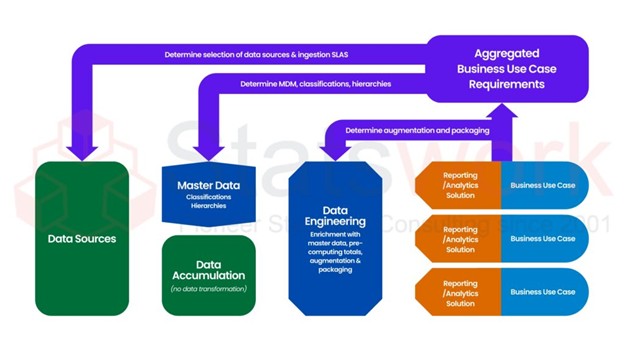

- Data Management Services

- Sample Work

Data Management Services

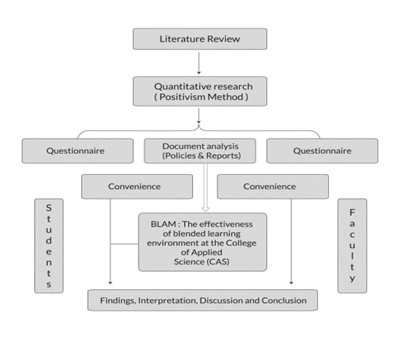

- Research methodology services

- Sample Work

Research methodology services

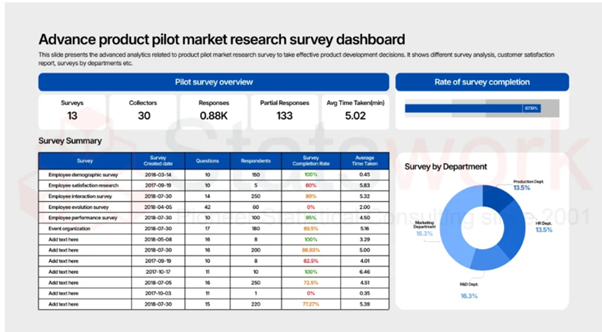

- Tool Development Services

- Sample Work

Tool development services

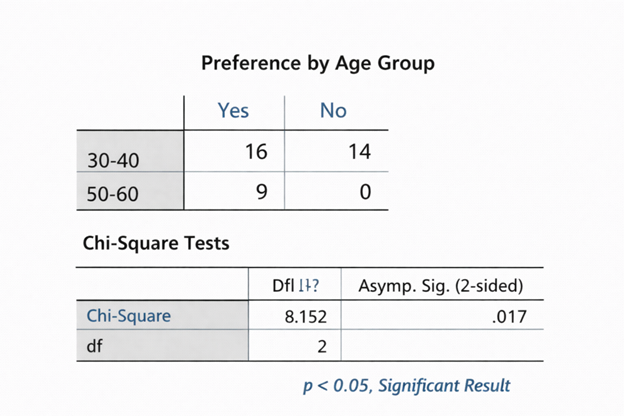

- Statistical Interpretation services

-

Statistical Interpretation services

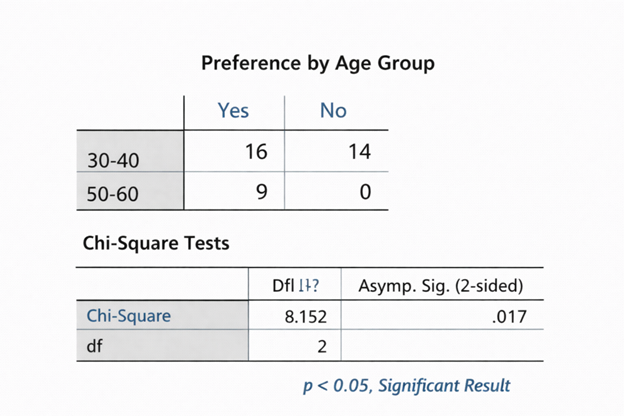

- Sample Work

Statistical Interpretation services

-

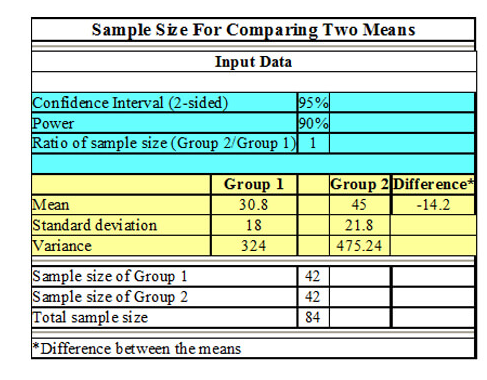

- Sample Size Calculation Services

-

Sample Size Calculation Services

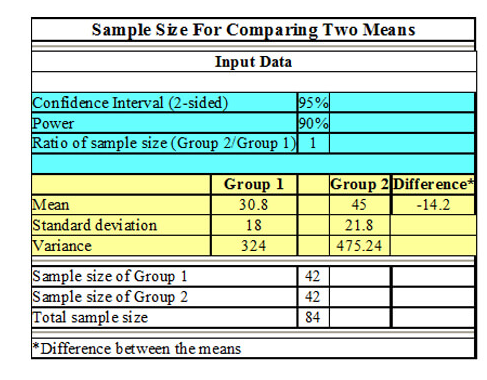

- Sample Work

Sample Size Calculation Services

-

- AI & ML Services

-

Artificial Intelligence and Machine Learning Services

- Sample Work

Artificial Intelligence and Machine Learning Services

-

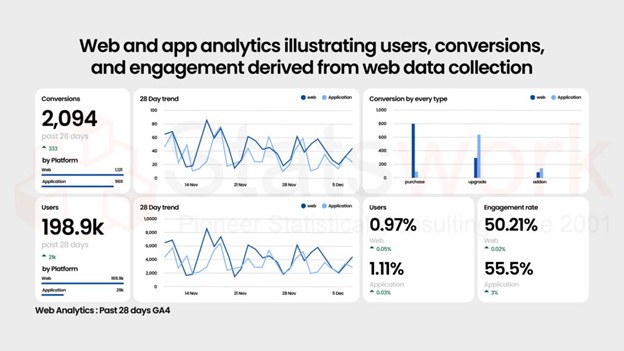

- Meaningful Visualization Services

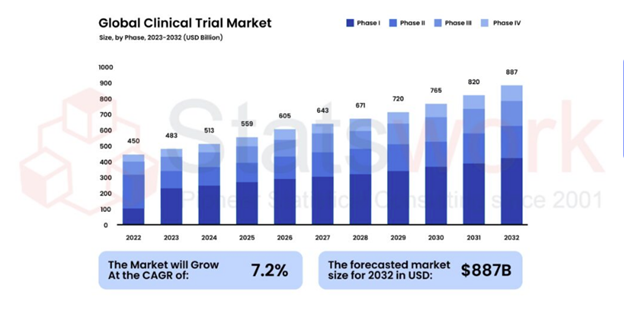

- Thought Leadership Services

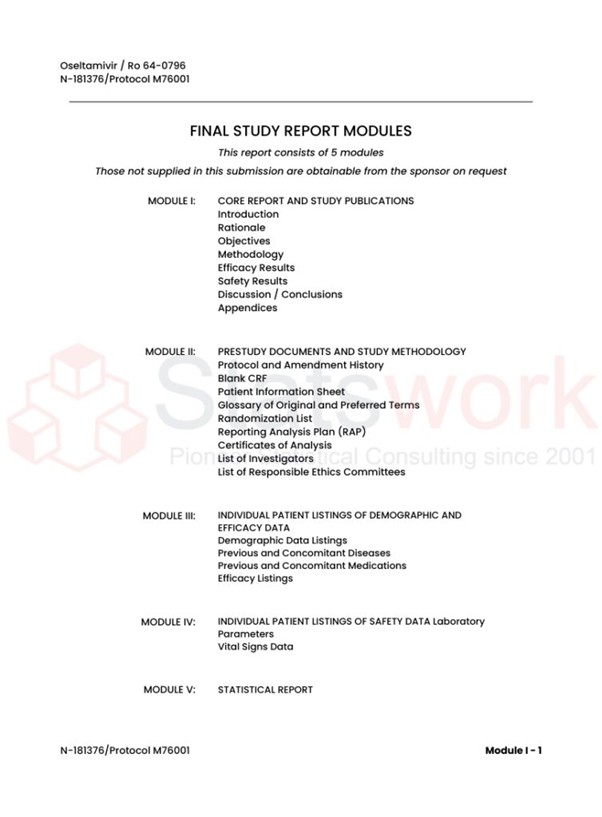

- Report Generation Services

-

Report generation Service

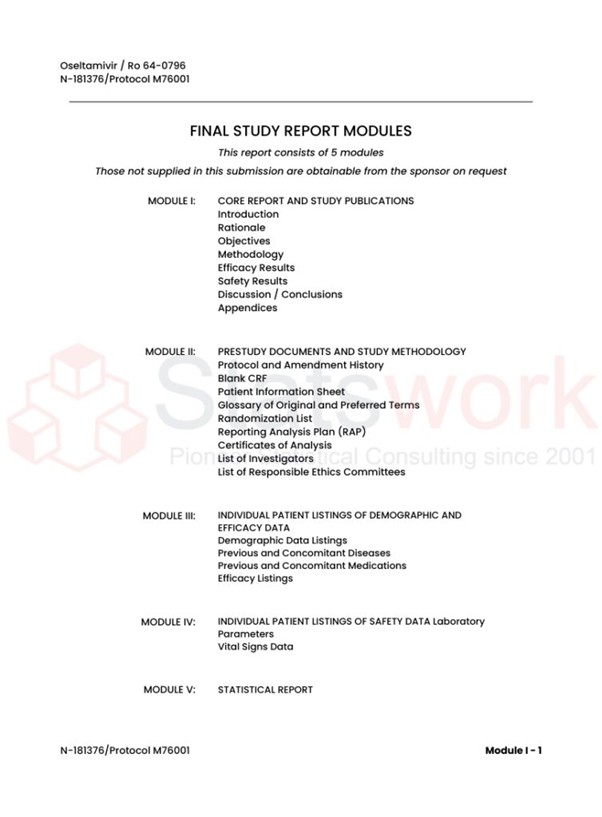

- Sample Work

Report generation Services

-

- Data Analysis services

- Industries

- About Us

- Insights

- Blog

- Contact Us

- Services

- Data Analysis services

- Sample Work

Data Analysis services

- Secondary Qualitative Research Services

- Secondary Quantitative Research Services

- Meta-Analysis Research services

- Sample Work

Meta-Analysis Research Services

- Data Collection Services

- Sample Work

Data Collection Services

- Statistical & Biostatistics services

- Sample Work

Statistical Programming & Biostatistics services

- Data Management Services

- Sample Work

Data Management Services

- Research methodology services

- Sample Work

Research methodology services

- Tool Development Services

- Sample Work

Tool development services

- Statistical Interpretation services

-

Statistical Interpretation services

- Sample Work

Statistical Interpretation services

-

- Sample Size Calculation Services

-

Sample Size Calculation Services

- Sample Work

Sample Size Calculation Services

-

- AI & ML Services

-

Artificial Intelligence and Machine Learning Services

- Sample Work

Artificial Intelligence and Machine Learning Services

-

- Meaningful Visualization Services

- Thought Leadership Services

- Report Generation Services

-

Report generation Service

- Sample Work

Report generation Services

-

- Data Analysis services

- Industries

- About Us

- Insights

- Blog

- Contact Us

Common Mistakes in Data Analysis and How Experts Avoid Them

- What is Data Analysis and Why Accuracy Matters

- What Are Common Mistakes in Data Analysis?

- Detailed Breakdown of Common Mistakes in Quantitative Data Analysis

- Tips to Avoid Mistakes in Data Analysis

- Step-by-Step Approach Experts Follow

- Tools Experts Use to Avoid Data Analysis Errors

- Challenges in Maintaining Research Accuracy

- Who Needs to Avoid These Mistakes?

Data analysis is the foundation of informed decision-making in research and business. However, even experienced professionals can make mistakes that compromise the accuracy of research results and lead to incorrect conclusions. It is therefore crucial to understand these common mistakes and how experts avoid them [1].

This guide discusses the most common mistakes made during research data analysis, along with the strategies experts use to achieve precision, consistency, and accuracy in research results.

What is Data Analysis and Why Accuracy Matters

Data analysis is the process of examining, cleaning, transforming, and modeling data to uncover valuable insights. In academic and business environments, even minor statistical mistakes can cause serious problems, including:

- Misleading conclusions

- Poor decision-making

- Loss of credibility

- Waste of resources

Therefore, it is important to ensure data validation and accuracy to maintain the integrity of results [2].

What Are Common Mistakes in Data Analysis?

The table below summarizes the most common data analysis errors:

| Mistake | Description | Impact |
|---|---|---|
| Poor data quality | Incomplete, inconsistent, or inaccurate data | Skewed results |
| Ignoring missing data | Null values are not properly addressed | Biased analysis |
| Incorrect statistical methods | Wrong tests or models are used | Invalid conclusions |
| Overfitting models | Model is too complex for the data set | Poor generalization |
| Lack of data validation | Data quality is not checked | Reduced research accuracy |
| Misinterpretation of results | Findings are read incorrectly, e.g. correlation treated as causation | Wrong conclusions |
| Confirmation bias | Results that match expectations are favored | Skewed results |

Detailed Breakdown of Common Mistakes in Quantitative Data Analysis

1. Poor Data Collection and Preparation

The biggest mistakes in the research data analysis process often occur before the actual analysis begins, during data collection and preparation.

Common errors:

- Incomplete data sets

- Duplicate records

- Inconsistent formats

Expert approach:

- Thoroughly validate the data

- Make use of automated cleaning processes
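As a rough illustration of an automated cleaning step, the sketch below drops exact duplicates, normalizes two date formats, and sets incomplete records aside for review. The record layout, field names, and the `clean` helper are hypothetical and chosen only for this example; a real pipeline would need rules suited to its own data.

```python
from datetime import datetime

# Hypothetical raw survey records; field names are illustrative only.
RAW_RECORDS = [
    {"id": "001", "date": "2024-01-15", "score": "7"},
    {"id": "001", "date": "2024-01-15", "score": "7"},  # exact duplicate
    {"id": "002", "date": "15/01/2024", "score": "9"},  # different date format
    {"id": "003", "date": "2024-01-16", "score": ""},   # incomplete record
]

# Assumed set of date formats that may appear in the raw data.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y")


def parse_date(text):
    """Return an ISO date string, or None if no known format matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text, fmt).date().isoformat()
        except ValueError:
            continue
    return None


def clean(records):
    """Drop exact duplicates, normalize dates, and separate incomplete rows."""
    seen = set()
    complete, incomplete = [], []
    for rec in records:
        key = tuple(sorted(rec.items()))
        if key in seen:
            continue  # skip a record that was already seen
        seen.add(key)
        row = dict(rec, date=parse_date(rec["date"]))
        if row["date"] is None or not row["score"].strip():
            incomplete.append(row)  # keep for manual review, do not discard
        else:
            row["score"] = int(row["score"])
            complete.append(row)
    return complete, incomplete


complete, incomplete = clean(RAW_RECORDS)
print(len(complete), "clean rows;", len(incomplete), "rows need review")
```

Keeping incomplete rows in a separate list, rather than deleting them, leaves the decision about how to handle them to a later, deliberate step.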

2. Ignoring Missing or Outlier Data

Missing values and outliers can greatly impact the results if not considered.

Common mistakes:

- Deletion of data without proper understanding

- Failure to check for outliers

How experts avoid it:

- Imputation techniques

- Checking for outliers before deletion

- Statistical techniques for handling missing values
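The sketch below shows one simple way to combine these ideas: flag outliers with the 1.5 × IQR rule before deciding what to do with them, and fill missing values with the median. The measurements are made up for illustration, and median imputation is only one of several possible techniques; it is used here because it is easy to follow, not because it is always appropriate.

```python
import statistics

# Hypothetical measurements; None marks a missing value.
values = [12.0, 14.5, None, 13.2, 15.1, 98.0, 12.8, None, 14.0]

observed = [v for v in values if v is not None]

# Flag outliers with the 1.5 * IQR rule instead of deleting them outright.
q1, _, q3 = statistics.quantiles(observed, n=4)
iqr = q3 - q1
low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr
outliers = [v for v in observed if v < low or v > high]

# Median imputation: the median is less sensitive to extreme values
# than the mean, so the flagged outlier barely affects the fill value.
median = statistics.median(observed)
imputed = [median if v is None else v for v in values]

print("flagged for review:", outliers)
print("after imputation:", imputed)
```

The flagged values are reported rather than removed, so an analyst can check whether they are entry errors or genuine observations.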

3. Using Incorrect Statistical Techniques

One of the greatest sources of statistical errors is choosing inappropriate methods for data analysis.

Examples:

- Using parametric tests for non-normal data

- Misusing correlation and causation

Expert strategy:

- Matching methods to data type and distribution

- Verifying assumptions before conducting tests

- Using software for precise calculations [3]
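When the normality assumption behind a parametric test is doubtful, a distribution-free method is one option. The sketch below is a minimal exact permutation test for a difference in means, written with the standard library only. The function name `permutation_test` and the sample values are illustrative assumptions, and the exhaustive enumeration is only practical for small samples.

```python
from itertools import combinations
from statistics import mean


def permutation_test(a, b):
    """Exact two-sided permutation test for a difference in means.

    Enumerates every way to split the pooled data into groups of the
    original sizes and counts the splits whose mean difference is at
    least as large as the observed one.
    """
    pooled = a + b
    observed = abs(mean(a) - mean(b))
    total = sum(pooled)
    n_a, n = len(a), len(pooled)
    extreme = count = 0
    for idx in combinations(range(n), n_a):
        sum_a = sum(pooled[i] for i in idx)
        diff = abs(sum_a / n_a - (total - sum_a) / (n - n_a))
        count += 1
        if diff >= observed - 1e-12:  # small tolerance for float rounding
            extreme += 1
    return extreme / count


# Hypothetical samples; group_b is skewed by one large value,
# which makes a normality assumption questionable.
group_a = [1.2, 1.5, 1.1, 1.9, 1.4]
group_b = [3.8, 4.4, 3.9, 12.5, 4.1]

p_value = permutation_test(group_a, group_b)
print(f"p = {p_value:.4f}")
```

Because every value in `group_a` is smaller than every value in `group_b`, only the observed split and its mirror image are this extreme, so the p-value is 2 out of 252 possible splits.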

4. Overfitting or Underfitting Models

Predictive models commonly fail in one of two ways: they fit the training data too closely, or not closely enough.

Overfitting:

- The model learns noise rather than patterns

Underfitting:

- The model is too simple to learn trends

Expert solution:

- Use cross-validation

- Divide the data into two sets: training and test

- Optimize model complexity
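To make the idea of cross-validation concrete, the sketch below compares a simple linear fit with a 1-nearest-neighbour model that memorizes its training points. All function names and the synthetic data are assumptions made for this example. On noisy linear data like this, the memorizing model would typically show the higher held-out error, but the exact numbers depend on the random seed.

```python
import random
from statistics import mean


def k_fold_indices(n, k, seed=0):
    """Shuffle indices 0..n-1 and split them into k nearly equal folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]


def fit_line(xs, ys):
    """Ordinary least squares for y = a + b * x."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    a = my - b * mx
    return lambda x: a + b * x


def fit_nearest(xs, ys):
    """1-nearest-neighbour: memorizes the training points, noise included."""
    pairs = list(zip(xs, ys))
    return lambda x: min(pairs, key=lambda p: abs(p[0] - x))[1]


def cv_mse(fit, xs, ys, k=5):
    """Mean squared error averaged over k held-out folds."""
    errors = []
    for held_out in k_fold_indices(len(xs), k):
        held = set(held_out)
        train = [i for i in range(len(xs)) if i not in held]
        model = fit([xs[i] for i in train], [ys[i] for i in train])
        errors.append(mean((model(xs[i]) - ys[i]) ** 2 for i in held_out))
    return mean(errors)


# Synthetic data (an assumption for illustration): linear trend plus noise.
rng = random.Random(42)
xs = [float(i) for i in range(40)]
ys = [2.0 * x + 1.0 + rng.gauss(0, 3) for x in xs]

for name, fit in [("line", fit_line), ("1-NN", fit_nearest)]:
    print(name, "cross-validated MSE:", round(cv_mse(fit, xs, ys), 2))
```

Each observation is held out exactly once, so the error estimate never rewards a model for reproducing data it has already seen.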

5. Lack of Data Validation

Skipping data validation produces unreliable outcomes.

Common data analysis errors include:

- Not verifying data sources

- Ignoring inconsistencies

Expert practices:

- Implement validation rules

- Cross-check data with multiple sources

- Conduct consistency checks
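Validation rules can be as simple as one check per field. The sketch below is a minimal, assumed rule set: the field names, the allowed ranges, and the `validate` helper are all hypothetical and would need to be replaced with rules that match the actual study design.

```python
# Hypothetical validation rules: one predicate per field.
RULES = {
    "age": lambda v: isinstance(v, int) and 0 <= v <= 120,
    "email": lambda v: isinstance(v, str) and v.count("@") == 1,
    "group": lambda v: v in {"control", "treatment"},
}


def validate(record):
    """Return the names of fields that are missing or break a rule."""
    return [
        field
        for field, is_valid in RULES.items()
        if field not in record or not is_valid(record[field])
    ]


# Made-up records used only to exercise the rules.
records = [
    {"age": 34, "email": "a@example.org", "group": "control"},
    {"age": 340, "email": "b@example.org", "group": "treatment"},
    {"age": 29, "email": "no-at-sign", "group": "placebo"},
]

report = {i: validate(r) for i, r in enumerate(records)}
for i, problems in report.items():
    print(i, "OK" if not problems else f"failed: {problems}")
```

Returning the list of failing fields, instead of a single pass/fail flag, makes it easier to trace inconsistencies back to their source.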

6. Misinterpreting Results

Even a correct analysis can fail if its results are interpreted incorrectly.

Common problems:

- Confusion of correlation and causation

- Overgeneralization

How experts avoid mistakes:

- Use of domain knowledge

- Validation using multiple methods

- Clear definition of limitations
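The difference between correlation and causation can be shown with a small constructed example. In the sketch below, two variables `x` and `y` are both driven by a third variable `z` and have no direct link to each other. The data are synthetic and the helper names are assumptions for illustration; partial correlation is used here as one simple way to check whether an association survives once a suspected confounder is accounted for.

```python
from math import sqrt
from statistics import mean


def pearson(a, b):
    """Pearson correlation coefficient of two equal-length sequences."""
    ma, mb = mean(a), mean(b)
    num = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    den = sqrt(sum((x - ma) ** 2 for x in a) * sum((y - mb) ** 2 for y in b))
    return num / den


def partial_corr(x, y, z):
    """Correlation of x and y after removing the linear effect of z."""
    rxy, rxz, ryz = pearson(x, y), pearson(x, z), pearson(y, z)
    return (rxy - rxz * ryz) / sqrt((1 - rxz ** 2) * (1 - ryz ** 2))


# Synthetic data: z is a common cause; x and y each equal z plus
# their own unrelated disturbance.
z = [1, 2, 3, 4, 5, 6, 7, 8]
noise_x = [0.5, -0.5, 0.5, -0.5, 0.5, -0.5, 0.5, -0.5]
noise_y = [0.5, 0.5, -0.5, -0.5, 0.5, 0.5, -0.5, -0.5]
x = [zi + e for zi, e in zip(z, noise_x)]
y = [zi + e for zi, e in zip(z, noise_y)]

r_xy = pearson(x, y)
r_xy_given_z = partial_corr(x, y, z)
print(f"raw correlation:       {r_xy:.2f}")
print(f"controlling for z:     {r_xy_given_z:.2f}")
```

The raw correlation is about 0.95, while the partial correlation is close to zero. A strong association between two variables is therefore not evidence that one causes the other.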

7. Confirmation Bias

Analysts can also unintentionally favor results that tend to support their expectations.

Impact:

- Reduced objectivity

- Biased insights [4]

Expert approach:

- Use blind analysis techniques

- Encourage peer review

- Test alternative hypotheses
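One simple form of blind analysis is to hide the real group labels until the analysis plan is fixed. The sketch below is an assumed, minimal version of that idea: the `blind_labels` helper, the code names `G1` and `G2`, and the seed are all hypothetical. In practice the key would be held by someone outside the analysis team.

```python
import random


def blind_labels(groups, seed):
    """Replace real group names with neutral codes; return data and the key."""
    names = sorted(set(groups))
    codes = [f"G{i + 1}" for i in range(len(names))]
    random.Random(seed).shuffle(codes)  # code order reveals nothing
    key = dict(zip(names, codes))
    return [key[g] for g in groups], key


# Hypothetical group assignments for four participants.
groups = ["treatment", "control", "control", "treatment"]

blinded, key = blind_labels(groups, seed=7)
print("analyst sees:", blinded)

# Unblinding happens only after the analysis has been finalized.
unblind = {code: name for name, code in key.items()}
restored = [unblind[c] for c in blinded]
```

Because the analyst works only with neutral codes, there is less room to favor the result that matches expectations.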

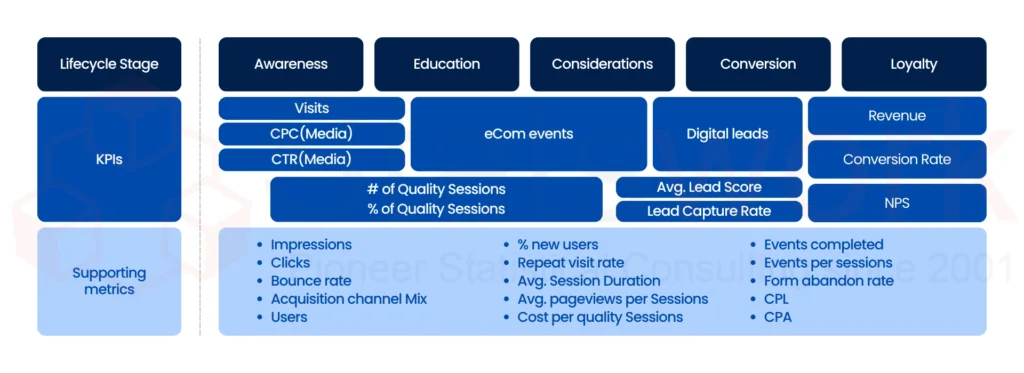

Figure 1: Key Data Analysis KPIs Across the Customer Lifecycle

Tips to Avoid Mistakes in Data Analysis

Here are some tested best practices that experts follow to avoid mistakes:

Data Preparation

- Clean and prepare data

- Standardize formats and eliminate duplicate data

Statistical Accuracy

- Select appropriate statistical methods

- Validate assumptions before testing

Validation Techniques

- Develop effective data validation processes

- Utilize automated data validation tools

Model Optimization

- Utilize cross-validation

- Prevent overfitting using simpler models

Interpretation Best Practices

- Focus on context, not just numbers

- Validate results with experts

Step-by-Step Approach Experts Follow

Step 1: Define Objectives Clearly

- Identify research objectives

- Avoid overly broad research questions

Step 2: Collect High-Quality Data

- Use reliable data sources

- Ensure consistency

Step 3: Clean and Validate Data

- Validate data

- Handle missing values

Step 4: Apply Correct Analytical Methods

- Choose appropriate statistical methods

- Test assumptions

Step 5: Interpret Results Carefully

- Avoid bias

- Cross-check

Step 6: Review and Optimize

- Peer reviews

- Improve models and analysis

Tools Experts Use to Avoid Data Analysis Errors

| Tool Type | Examples | Purpose |
|---|---|---|
| Statistical software | R, SPSS, SAS | Accurate analysis |
| Data visualization | Tableau, Power BI | Better interpretation |
| Data cleaning tools | OpenRefine, Python libraries | Remove inconsistencies |
| Validation tools | Excel validation, scripts | Ensure data accuracy [4] |

Challenges in Maintaining Research Accuracy

Even experts experience challenges such as:

- Large and complex datasets

- Time constraints

- Incomplete data

- Evolving methodologies

Overcoming these challenges requires continuous learning, consistent validation practices, and structured analytical frameworks.

Who Needs to Avoid These Mistakes?

This guide can be useful for:

- Researchers and academicians

- Data analysts and scientists

- Business intelligence professionals

- Students working on quantitative research

Conclusion

The first step towards improving research quality is understanding the common mistakes in data analysis. From data handling to the application of statistical procedures, these mistakes can have a profound impact on results.

By employing expert strategies such as data validation, the selection of appropriate analytical procedures, and the elimination of bias, you can improve the accuracy of your research and make confident decisions.

Avoid mistakes. Improve accuracy. Make better decisions.

Start your data analysis services journey with Statswork today.

Frequently Asked Questions (FAQs)

1. What are common mistakes in data analysis?

Common mistakes in data analysis include poor data quality, ignoring missing data, using incorrect statistical methods, lack of data validation, overfitting models, and misinterpreting results. These errors can reduce research accuracy and lead to misleading conclusions.

2. Why do errors occur in research data analysis?

Errors in research data analysis often occur due to inadequate data preparation, lack of proper statistical knowledge, poor data validation practices, and time constraints. Human bias and incorrect tool usage can also contribute to inaccuracies.

3. What are common mistakes in quantitative data analysis?

Common mistakes in quantitative data analysis include selecting inappropriate statistical tests, ignoring outliers, mishandling missing data, and overgeneralizing results. These mistakes can significantly impact the validity of research findings.

4. How do experts avoid data analysis mistakes?

Experts avoid data analysis mistakes by following structured processes such as proper data cleaning, applying suitable statistical techniques, conducting thorough data validation, and using cross-validation methods. Peer reviews and domain expertise also play a key role.

References:

- Brown, A. W., Kaiser, K. A., & Allison, D. B. (2018). Issues with data and analyses: Errors, underlying themes, and potential solutions. Proceedings of the National Academy of Sciences, 115(11), 2563-2570. https://www.pnas.org/doi/abs

- Turkiewicz, A., Luta, G., Hughes, H. V., & Ranstam, J. (2018). Statistical mistakes and how to avoid them–lessons learned from the reproducibility crisis. Osteoarthritis and Cartilage, 26(11), 1409-1411. https://www.oarsijournal.com

- Chaimani, A., Salanti, G., Leucht, S., Geddes, J. R., & Cipriani, A. (2017). Common pitfalls and mistakes in the set-up, analysis and interpretation of results in network meta-analysis: what clinicians should look for in a published article. Evidence Based Mental Health, 20(3). https://mentalhealth.bmj.com

- Lin, H., Lisnic, M., Akbaba, D., Meyer, M., & Lex, A. (2025). Here’s What You Need to Know about My Data: Exploring Expert Knowledge’s Role in Data Analysis. IEEE Transactions on Visualization and Computer Graphics. https://ieeexplore.ieee.org