What is Data Validation and Cleaning?



Data Collection

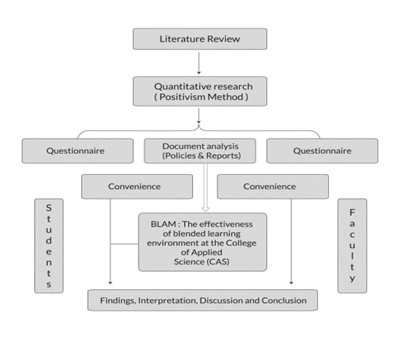

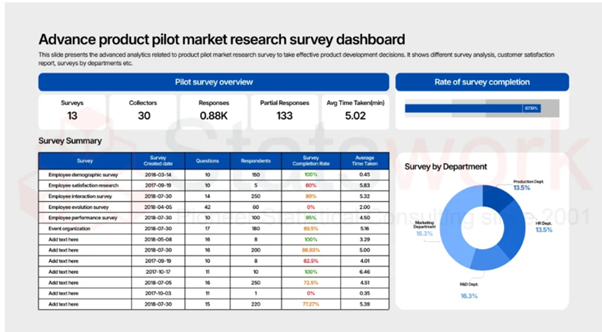

As the data collection methods have extreme influence over the validity of the research outcomes, it is considered as the crucial aspect of the studies


Data Validation

Data validation is the process of ensuring that collected data follows defined rules, formats, and constraints before any processing or analysis takes place. Its main purpose is to keep incomplete, inaccurate, or illogical data out of the dataset. Data validation is the first line of defence for data quality.[2]

Principles of Effective Data Validation

Effective data validation ensures that data is accurate, reliable, and suitable for analysis by applying systematic quality checks at every stage of data handling.

- Accuracy checks: Validating that data values are logically correct, for instance that ages or quantities are plausible.

- Format validation: Verifying that data conforms to a required structure, such as a standard date or text format.

- Range checks: Verifying that values fall within set limits, such as between 0 and 100.

- Consistency checks: Ensuring that related fields do not contradict each other, for example that a start date falls before an end date.

- Completeness checks: Identifying missing or null values that would lower the quality of the data.

Data validation is typically applied when data is entered, collected, or imported, where it acts as a preventive measure against data errors.[3]
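The five checks listed above can be sketched in a few lines of Python. This is only an illustrative sketch, not a prescribed implementation: the field names (`participant_id`, `age`, `score`, `start_date`, `end_date`), the 0 to 120 age limit, and the `YYYY-MM-DD` date format are all assumptions chosen for the example.

```python
import re
from datetime import date

# Hypothetical schema: these field names are assumptions for illustration only.
REQUIRED_FIELDS = ("participant_id", "age", "score", "start_date", "end_date")


def parse_iso_date(value):
    """Return a date if value is a strict YYYY-MM-DD string, otherwise None."""
    if not isinstance(value, str) or not re.fullmatch(r"[0-9]{4}-[0-9]{2}-[0-9]{2}", value):
        return None
    try:
        return date.fromisoformat(value)
    except ValueError:  # e.g. month 13 or day 32
        return None


def validate_record(record):
    """Return a list of rule violations; an empty list means the record is accepted."""
    errors = []

    # Completeness check: every required field must be present and non-empty.
    for field in REQUIRED_FIELDS:
        if record.get(field) in (None, ""):
            errors.append(f"{field}: missing value")

    # Accuracy check: age must be a logically possible whole number (assumed 0-120).
    age = record.get("age")
    if age not in (None, "") and not (isinstance(age, int) and 0 <= age <= 120):
        errors.append("age: not a plausible whole number")

    # Range check: score must lie within the set limits of 0 to 100.
    score = record.get("score")
    if score not in (None, "") and not (isinstance(score, (int, float)) and 0 <= score <= 100):
        errors.append("score: outside 0-100")

    # Format validation: dates must follow the YYYY-MM-DD structure.
    start = parse_iso_date(record.get("start_date"))
    end = parse_iso_date(record.get("end_date"))
    for field, parsed in (("start_date", start), ("end_date", end)):
        if record.get(field) not in (None, "") and parsed is None:
            errors.append(f"{field}: not in YYYY-MM-DD format")

    # Consistency check: the start date must not fall after the end date.
    if start and end and start > end:
        errors.append("date range: start_date is after end_date")

    return errors


good = {"participant_id": "P001", "age": 34, "score": 88.5,
        "start_date": "2024-01-10", "end_date": "2024-02-01"}
bad = {"participant_id": "", "age": 150, "score": 101,
       "start_date": "10/01/2024", "end_date": "2024-02-01"}

print(validate_record(good))  # []
for problem in validate_record(bad):
    print(problem)
```

Returning a list of violations rather than a single true/false value is a design choice: it lets the data-entry form or import job report every problem with a record at once.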

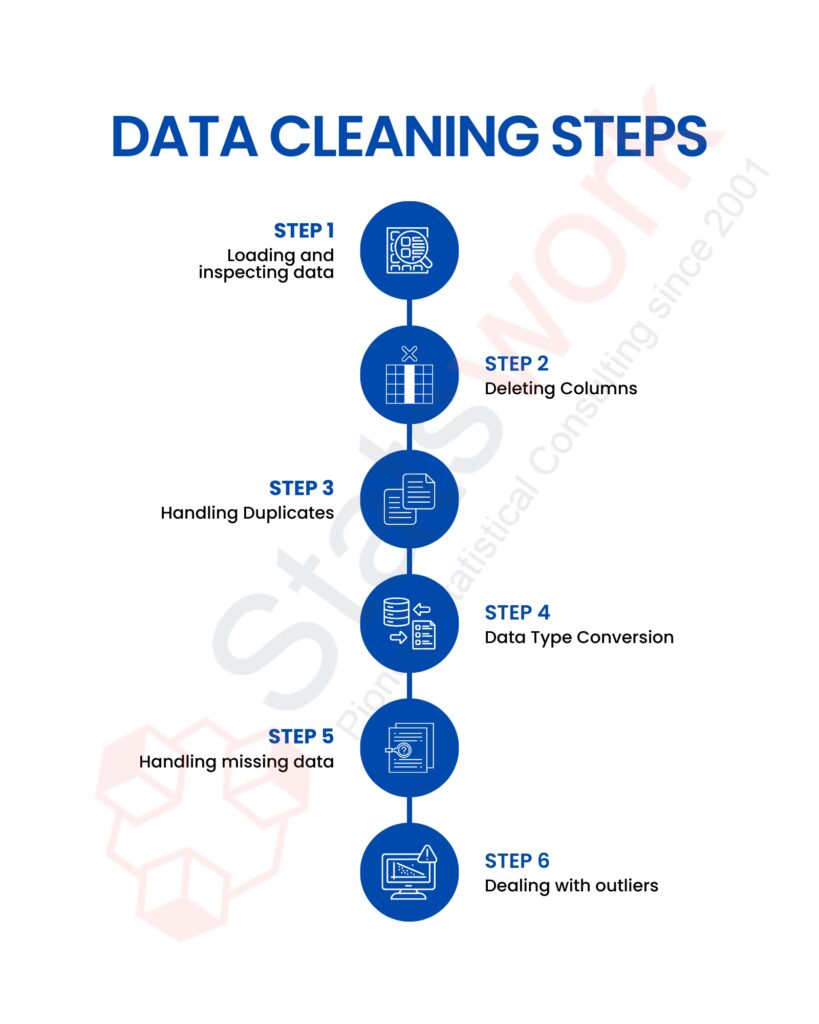

Data Cleaning

Data cleaning, sometimes referred to as data cleansing, is the process of finding and then removing or repairing errors in an existing dataset. While validation tries to prevent errors from entering the data, cleaning repairs the data that already exists.[4]

Essential Processes in Data Cleaning

Data cleaning is a set of systematic activities that improve data quality by eliminating errors, inconsistencies, and redundancies.

- Handling missing data: Replace missing values using an appropriate method, or delete the records that contain them.

- Removing duplicates: Identify and remove duplicate records to avoid bias or over-representation in the dataset.

- Correcting errors: Fix misspelled names, wrong nomenclature or labels, and incorrect entries.

- Standardizing data: Convert all values to the same standard format across the complete dataset.

- Outlier treatment: Identify anomalous values and decide, depending on the context, whether to correct, remove, or retain them.

Data cleaning should enhance data accuracy, consistency, and usability.[4]
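As a rough illustration, the cleaning steps above might look like this in Python using only the standard library. Everything specific here is an assumption made for the example: the `id`, `gender`, and `income` fields, the `LABEL_FIXES` correction table, median imputation as the missing-data method, treating `id` as the duplicate key, and the 1.5 × IQR rule for flagging outliers. Other methods may suit a given dataset better.

```python
from statistics import median, quantiles

# Hypothetical correction table for known misspelled labels.
LABEL_FIXES = {"femal": "female", "mle": "male"}


def clean_records(records):
    """Return (cleaned_records, outlier_ids) without modifying the input rows."""
    cleaned, seen_ids = [], set()
    for rec in records:
        rec = dict(rec)  # work on a copy so the raw data stays untouched

        # Standardizing data: trim whitespace and use one letter case.
        gender = (rec.get("gender") or "").strip().lower()
        # Correcting errors: map known misspellings to the right label.
        rec["gender"] = LABEL_FIXES.get(gender, gender) or None

        # Removing duplicates: keep the first record per id (assumes id is unique).
        if rec["id"] in seen_ids:
            continue
        seen_ids.add(rec["id"])
        cleaned.append(rec)

    # Handling missing data: impute missing income with the median of observed values.
    # Assumes at least two observed incomes exist.
    observed = [r["income"] for r in cleaned if r.get("income") is not None]
    fill_value = median(observed)
    for r in cleaned:
        if r.get("income") is None:
            r["income"] = fill_value

    # Outlier treatment: flag (do not delete) values outside 1.5 * IQR of the quartiles,
    # leaving the decision to correct or retain them to the analyst.
    q1, _, q3 = quantiles(observed, n=4, method="inclusive")
    low, high = q1 - 1.5 * (q3 - q1), q3 + 1.5 * (q3 - q1)
    outlier_ids = [r["id"] for r in cleaned if not low <= r["income"] <= high]

    return cleaned, outlier_ids


raw = [
    {"id": 1, "gender": " Female ", "income": 42000},
    {"id": 2, "gender": "mle", "income": 39000},
    {"id": 2, "gender": "mle", "income": 39000},   # duplicate record
    {"id": 3, "gender": "FEMAL", "income": None},  # misspelled label, missing income
    {"id": 4, "gender": "male", "income": 45000},
    {"id": 5, "gender": "female", "income": 41000},
    {"id": 6, "gender": "Male", "income": 44000},
    {"id": 7, "gender": "female", "income": 900000},  # anomalous value
]

cleaned, outlier_ids = clean_records(raw)
print(len(cleaned), outlier_ids)
```

Flagging outliers instead of dropping them keeps the context-dependent decision with the analyst, in line with the outlier treatment step described above.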

Difference Between Data Validation and Data Cleaning

| Data Validation | Data Cleaning |
| --- | --- |
| Prevents wrong or invalid data from entering the system. | Corrects errors that already exist in the data. |
| Done before or during data entry or import. | Done after data collection is completed. |
| Uses pre-defined rules to check accuracy and format. | Cleanses the data by correcting mistakes and inconsistencies. |
| Data is accepted or rejected according to the rules. | Produces a clean and reliable dataset.[5] |
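The contrast in outcomes can be made concrete with a small sketch: validation accepts or rejects incoming rows without altering them, while cleaning repairs rows that are already in the dataset. The `score` and `country` fields and both function names are hypothetical, chosen only for this illustration.

```python
def is_valid(row):
    """Pre-defined rule: score must be a number between 0 and 100."""
    score = row.get("score")
    return isinstance(score, (int, float)) and 0 <= score <= 100


def validate_on_import(rows):
    """Validation: each row is accepted or rejected; nothing is altered."""
    accepted = [r for r in rows if is_valid(r)]
    rejected = [r for r in rows if not is_valid(r)]
    return accepted, rejected


def clean_after_collection(rows):
    """Cleaning: rows already in the dataset are repaired, not rejected."""
    return [{**r, "country": r["country"].strip().title()} for r in rows]


incoming = [
    {"score": 72, "country": " india "},
    {"score": 140, "country": "India"},          # breaks the range rule
    {"score": 55, "country": "UNITED KINGDOM"},
]

accepted, rejected = validate_on_import(incoming)
dataset = clean_after_collection(accepted)
print(rejected)
print(dataset)
```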

In summary, data validation and cleaning are essential for making data reliable and reducing analytical errors. Inaccurate or poor-quality data leads to inaccurate conclusions, which in turn lead to poor decisions. Together, validation and cleaning form the foundation of trustworthy data analysis: they ensure that the data is accurate and consistent, and they give confidence in the results.


References

1. Mendenhall, D. W. (1989). Cleaning validation. Drug Development and Industrial Pharmacy, 15(13), 2105-2114. https://www.tandfonline.com/doi/pdf/10.3109/03639048909052522

2. Gao, J., Xie, C., & Tao, C. (2016, March). Big data validation and quality assurance–issuses, challenges, and needs. In 2016 IEEE symposium on service-oriented system engineering (SOSE) (pp. 433-441). IEEE. https://ieeexplore.ieee.org/abstract/document/7473058/

3. Di Zio, M., Fursova, N., Gelsema, T., Gießing, S., Guarnera, U., Petrauskienė, J., … & Walsdorfer, K. (2016). Methodology for data validation 1.0. Essnet Validat Foundation. https://www.researchgate.net/profile/J-Tarafdar/post/How_can_i_validate_my_results/attachment/5aeab43c4cde260d15dc6236/AS%3A622097474793479%401525331004677/download/methodology_for_data_validation_v1.0_rev-2016-06_final.pdf

4. Chu, X., Ilyas, I. F., Krishnan, S., & Wang, J. (2016, June). Data cleaning: Overview and emerging challenges. In Proceedings of the 2016 international conference on management of data (pp. 2201-2206). https://dl.acm.org/doi/abs/10.1145/2882903.2912574

5. Pomerantseva, V., & Ilicheva, O. (2011). Clinical data collection, cleaning and verification in anticipation of database lock: practices and recommendations. Pharmaceutical Medicine, 25(4), 223-233. https://link.springer.com/article/10.1007/BF03256864