- Services

- Data Analysis services

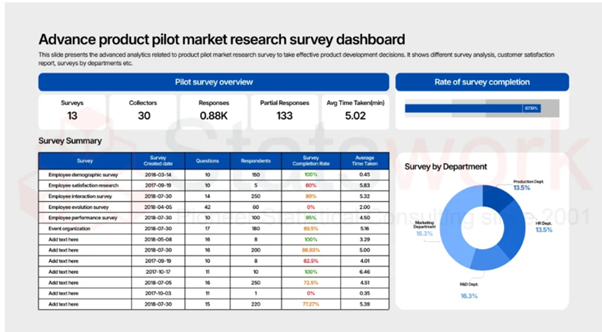

- Sample Work

Data Analysis services

- Secondary Qualitative Research Services

- Secondary Quantitative Research Services

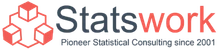

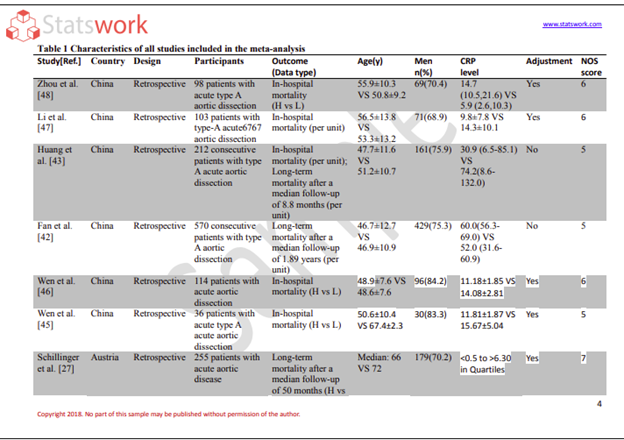

- Meta-Analysis Research services

- Sample Work

Meta-Analysis Research Services

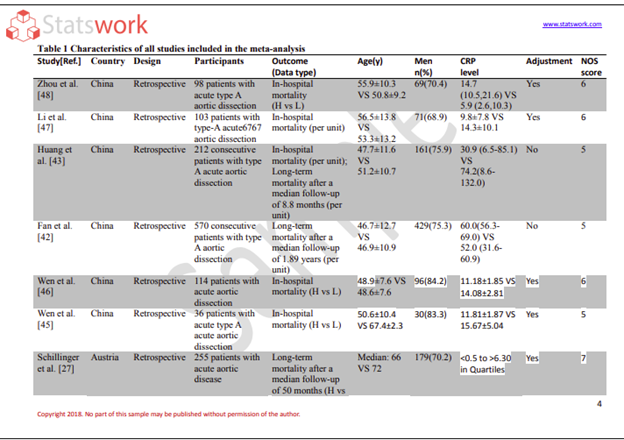

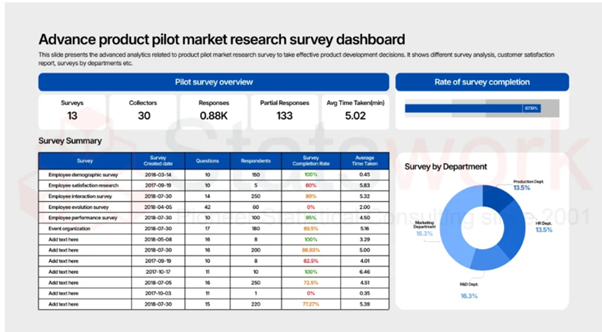

- Data Collection Services

- Sample Work

Data Collection Services

- Statistical & Biostatistics services

- Sample Work

Statistical Programming & Biostatistics services

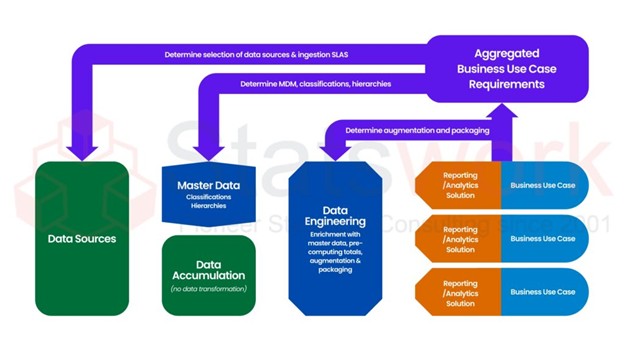

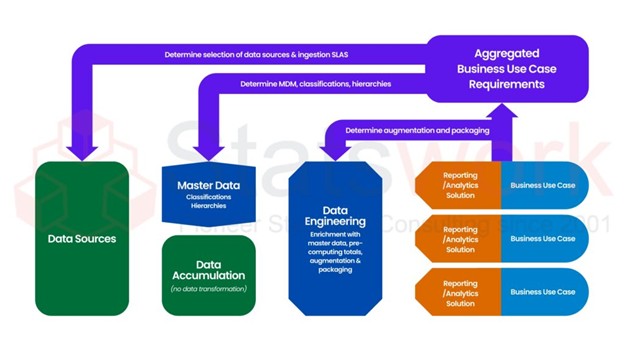

- Data Management Services

- Sample Work

Data Management Services

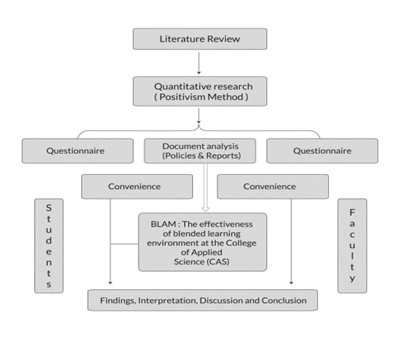

- Research methodology services

- Sample Work

Research methodology services

- Tool Development Services

- Sample Work

Tool development services

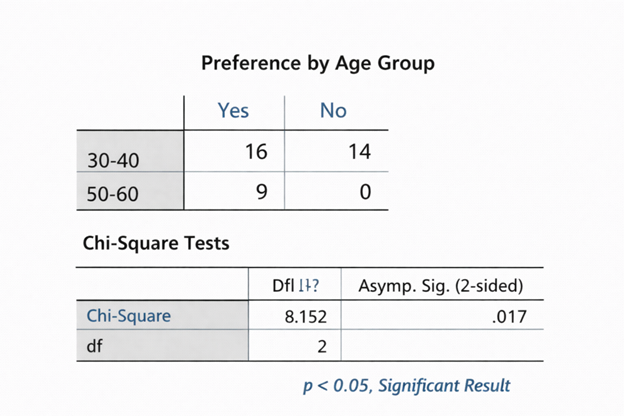

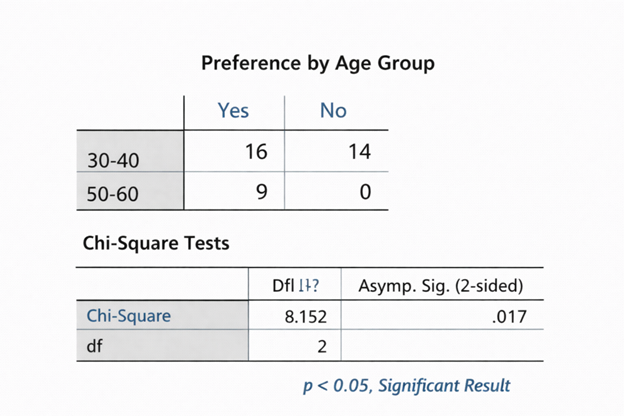

- Statistical Interpretation services

-

Statistical Interpretation services

- Sample Work

Statistical Interpretation services

-

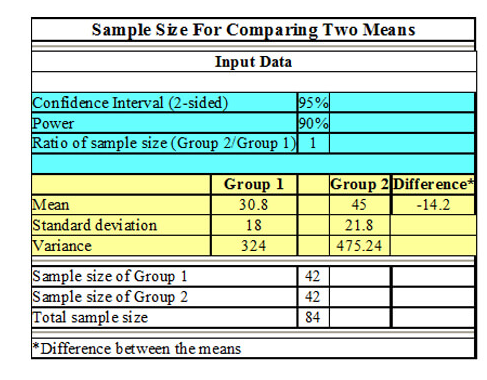

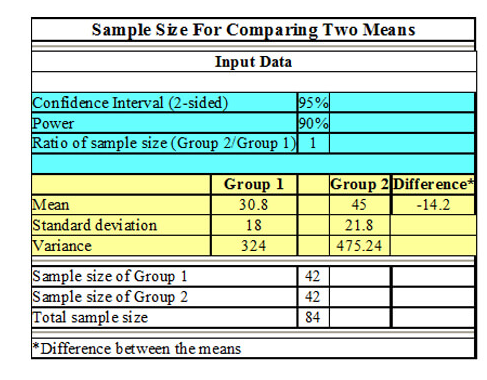

- Sample Size Calculation Services

-

Sample Size Calculation Services

- Sample Work

Sample Size Calculation Services

-

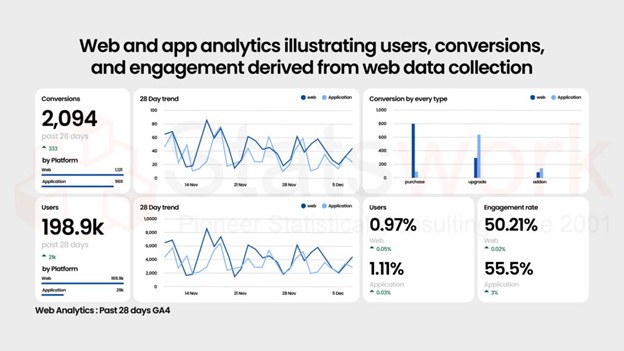

- AI & ML Services

-

Artificial Intelligence and Machine Learning Services

- Sample Work

Artificial Intelligence and Machine Learning Services

-

- Meaningful Visualization Services

- Thought Leadership Services

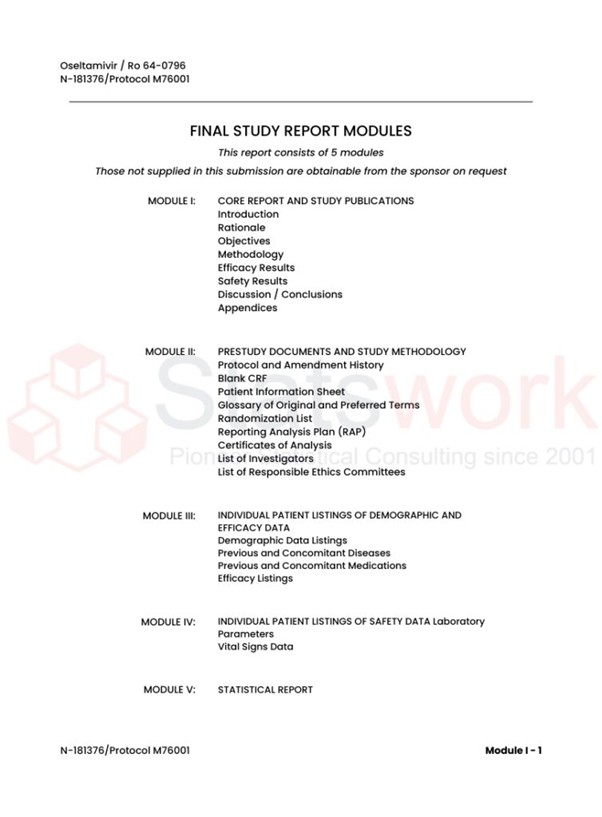

- Report Generation Services

-

Report generation Service

- Sample Work

Report generation Services

-

- Data Analysis services

- Industries

- About Us

- Blog

- Insights

- Contact Us

- Services

- Data Analysis services

- Sample Work

Data Analysis services

- Secondary Qualitative Research Services

- Secondary Quantitative Research Services

- Meta-Analysis Research services

- Sample Work

Meta-Analysis Research Services

- Data Collection Services

- Sample Work

Data Collection Services

- Statistical & Biostatistics services

- Sample Work

Statistical Programming & Biostatistics services

- Data Management Services

- Sample Work

Data Management Services

- Research methodology services

- Sample Work

Research methodology services

- Tool Development Services

- Sample Work

Tool development services

- Statistical Interpretation services

-

Statistical Interpretation services

- Sample Work

Statistical Interpretation services

-

- Sample Size Calculation Services

-

Sample Size Calculation Services

- Sample Work

Sample Size Calculation Services

-

- AI & ML Services

-

Artificial Intelligence and Machine Learning Services

- Sample Work

Artificial Intelligence and Machine Learning Services

-

- Meaningful Visualization Services

- Thought Leadership Services

- Report Generation Services

-

Report generation Service

- Sample Work

Report generation Services

-

- Data Analysis services

- Industries

- About Us

- Blog

- Insights

- Contact Us

How to Validate and Clean Data for Accurate Business Insights

- Home

- Insights

- Article

- How to Validate and Clean Data for Accurate Business Insights

AI and Machine Learning Success

- Why Data Validation and Cleaning Matter

- Common Data Issues That Need Fixing

- How to Validate and Clean Your Data

- Profile Your Data

- Set Business Rules and Validation Criteria

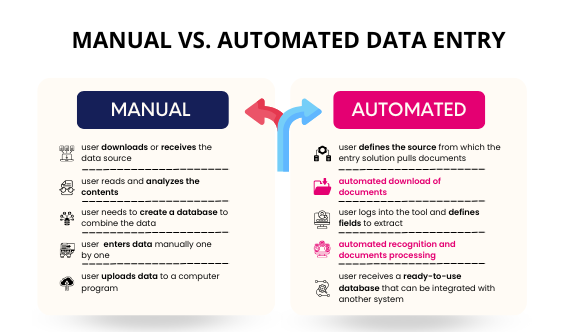

- Use Automated Tools for Data Cleaning

- work for you identifying and correcting

- Merge and Deduplicate

- Verify Against External Trusted Sources

- Conduct Human-in-the-Loop Review

- Audit and Monitor Data Quality Over Time

News & Trends

Recommended Reads

Data Collection

As the data collection methods have extreme influence over the validity of the research outcomes, it is considered as the crucial aspect of the studies

How to Validate and Clean Data for Accurate Business Insights

Table of Contents

- 1. Why Data Validation and Cleaning Matter

- 2. Common Data Issues That Need Fixing

- 3. How to Validate and Clean Your Data

- 4. Profile Your Data

- 5. Set Business Rules and Validation Criteria

- 6. Use Automated Tools for Data Cleaning

- 7. Scrub and Standardize the Data

- 8. Merge and Deduplicate

- 9. Verify Against External Trusted Sources

- 10. Conduct Human-in-the-Loop Review

- 11. Audit and Monitor Data Quality Over Time

- 12. Conclusion

- 13. References

May 2025 | Source: News-Medical

Introduction

In today’s data-driven economy, businesses thrive on their ability to turn raw data into strategic insights. However, data in its raw form often contains errors, inconsistencies, duplicates, or outdated records—making it unreliable for critical decision-making. This is why data validation and data cleaning are indispensable processes in modern analytics pipelines.

From healthcare and finance to telecom and retail, organizations that prioritize clean data and data quality gain a significant competitive edge. This article explores how to validate and clean data effectively for reliable, actionable business insights.[1]

Why Data Validation and Cleaning Matter

Poor-quality data can lead to:

- Inaccurate reports and forecasts

- Regulatory noncompliance

- Unnecessary spending and reduced operational productivity

- Poor customer experience

- Missed business opportunities

Common Data Issues That Need Fixing

- Duplicates

- Null or missing values

- Old, stale records

- Incorrect formats (date, currency, etc.)

- Inconsistent naming (e.g., “WA”, “Washington” or “John Doe” and “Doe, John”)

- Typing or other human errors (these can be widespread if most of your records are entered manually)

Step-by-Step Guide: How to Validate and Clean Your Data

1. Profile Your Data

- Identify anomalies

- Determine completeness

- Understand how values are distributed

- Identify unacceptable formats [4]
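The profiling checks above can be sketched with the Python standard library. This is a minimal illustration, not a full profiling tool: the sample records, the field names, and the `profile` helper are all hypothetical, and the only format check shown is the YYYY-MM-DD date pattern.

```python
import re
from collections import Counter

# Hypothetical sample records; field names are illustrative only.
records = [
    {"name": "John Doe", "state": "WA", "signup_date": "2024-01-15"},
    {"name": "Jane Roe", "state": "Washington", "signup_date": "15/01/2024"},
    {"name": "", "state": "WA", "signup_date": None},
]

DATE_PATTERN = re.compile(r"\d{4}-\d{2}-\d{2}")


def profile(rows, date_field):
    """Report completeness, value distributions, and badly formatted dates."""
    fields = sorted({key for row in rows for key in row})
    total = len(rows)
    report = {"completeness": {}, "distribution": {}, "bad_dates": []}
    for field in fields:
        # Treat None and empty strings as missing values.
        present = [row.get(field) for row in rows if row.get(field) not in (None, "")]
        report["completeness"][field] = len(present) / total if total else 0.0
        report["distribution"][field] = Counter(present)
    for index, row in enumerate(rows):
        value = row.get(date_field)
        # Flag dates that are present but not written as YYYY-MM-DD.
        if value and not DATE_PATTERN.fullmatch(value):
            report["bad_dates"].append((index, value))
    return report


summary = profile(records, "signup_date")
```

Here `summary["distribution"]["state"]` exposes the inconsistent "WA" and "Washington" spellings, and `summary["bad_dates"]` lists the rows whose dates need reformatting.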

2. Set Business Rules and Validation Criteria

- Names can only be alphabetic characters

- Phone numbers must follow a valid format for their region

- Dates must be in the format YYYY-MM-DD
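As a rough sketch, the three example rules could be expressed as small validation functions. The phone pattern below assumes a North American NNN-NNN-NNNN layout, and allowing single spaces between alphabetic name parts is also an assumption; both would need adjusting to your own business rules. The `validate` helper and its field names are hypothetical.

```python
import re
from datetime import datetime

# Assumption: names are alphabetic words separated by single spaces.
NAME_RE = re.compile(r"[A-Za-z]+(?: [A-Za-z]+)*")
# Assumption: North American style phone numbers, e.g. 206-555-0100.
PHONE_RE = re.compile(r"\d{3}-\d{3}-\d{4}")
DATE_SHAPE_RE = re.compile(r"\d{4}-\d{2}-\d{2}")


def is_valid_date(text):
    """True only for real calendar dates written exactly as YYYY-MM-DD."""
    if not DATE_SHAPE_RE.fullmatch(text):
        return False
    try:
        datetime.strptime(text, "%Y-%m-%d")
    except ValueError:
        return False  # e.g. 2024-02-30 has the right shape but is not a date
    return True


def validate(record):
    """Return the names of the fields that break a rule."""
    errors = []
    if not NAME_RE.fullmatch(record.get("name", "")):
        errors.append("name")
    if not PHONE_RE.fullmatch(record.get("phone", "")):
        errors.append("phone")
    if not is_valid_date(record.get("date", "")):
        errors.append("date")
    return errors


good = validate({"name": "John Doe", "phone": "206-555-0100", "date": "2024-01-15"})
bad = validate({"name": "J0hn", "phone": "12345", "date": "2024-02-30"})
```

Returning a list of failing fields, rather than a single boolean, makes it easier to report which rule a record broke.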

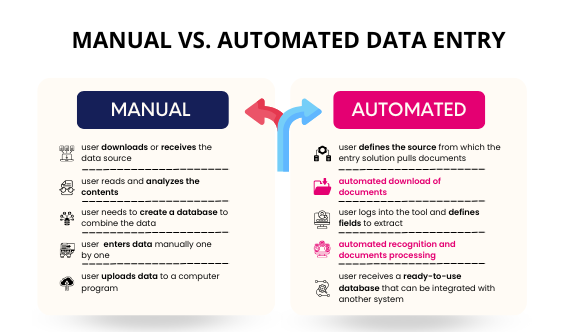

3. Use Automated Tools for Data Cleaning

- OpenRefine – Excellent for identifying duplicates and normalizing inconsistent data

- DataCleaner – For rule-based validation and profiling

- Microsoft Power Query – Best for cleaning and transforming data from Excel or Power BI

- Ataccama ONE – An AI-assisted platform for enterprise data quality and governance [5]

These tools do much of the work for you, identifying and correcting:

- Missing fields

- Duplicated values

- Incorrect data types

4. Scrub and Standardize the Data

- Converting all date fields to a standard format

- Ensuring country codes are uniform

- Normalizing text cases (e.g., all names in title case)
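The standardization steps above might look like the following sketch. The list of accepted input date layouts and the country lookup table are assumptions chosen for illustration; a real pipeline would use its own agreed formats and a complete code list.

```python
from datetime import datetime

# Assumption: incoming dates use one of these layouts (day-first for slashes).
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%Y/%m/%d")

# Hypothetical lookup from free-text country values to two-letter codes.
COUNTRY_CODES = {
    "us": "US", "usa": "US", "united states": "US",
    "gb": "GB", "uk": "GB", "united kingdom": "GB",
}


def standardize_date(text):
    """Convert a recognized date string to YYYY-MM-DD, or return None."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text.strip(), fmt).strftime("%Y-%m-%d")
        except ValueError:
            continue
    return None


def standardize_country(text):
    """Map a country value to its uniform code, or return None if unknown."""
    return COUNTRY_CODES.get(text.strip().lower())


def standardize_name(text):
    """Collapse extra whitespace and capitalize each word."""
    return " ".join(part.capitalize() for part in text.split())


clean_date = standardize_date("15/01/2024")
clean_country = standardize_country(" United States ")
clean_name = standardize_name("jOHN   doe")
```

Unrecognized values come back as `None` instead of being guessed, so they can be routed to manual review. Simple word capitalization also mishandles names such as "O'Brien" or "McDonald", which is one reason to keep a human check in the loop.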

5. Merge and Deduplicate

Data merging is the process of combining records and consolidating content from different sources into a single, consistent form, while removing duplicates. Merging records into a single entry is important for CRM databases, customer records, or product databases. [6]

Deduplication tools use fuzzy-matching algorithms to group related records, preventing duplicate customer or vendor profiles.
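To illustrate the idea of fuzzy matching, the sketch below uses `difflib.SequenceMatcher` from the Python standard library. It is not how any particular deduplication product works; the `normalize` step, the 0.9 threshold, and the sample names are assumptions, and dedicated tools use more robust matching.

```python
from difflib import SequenceMatcher


def normalize(name):
    """Lowercase, turn 'Doe, John' into 'john doe', and collapse whitespace."""
    name = name.strip().lower()
    if "," in name:
        last, first = name.split(",", 1)
        name = f"{first} {last}"
    return " ".join(name.split())


def similarity(a, b):
    """Similarity ratio between 0.0 and 1.0 on the normalized names."""
    return SequenceMatcher(None, normalize(a), normalize(b)).ratio()


def deduplicate(names, threshold=0.9):
    """Keep the first occurrence of each group of near-identical names."""
    kept = []
    for name in names:
        if not any(similarity(name, existing) >= threshold for existing in kept):
            kept.append(name)
    return kept


unique_names = deduplicate(["John Doe", "Doe, John", "Jane Smith"])
```

This compares every name against every kept name, so it suits small lists only. The threshold is a trade-off: at 0.9, "Jon Doe" would also be merged into "John Doe", which may or may not be what you want.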

6. Verify Against External Trusted Sources

Data verification checks your records against a reliable external source (e.g., a government registry or financial institution). This gives you additional confidence in your validated data.
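A simple way to picture this step is a lookup against a reference table. In the sketch below the registry is a hard-coded dictionary standing in for a real external source; the registry IDs, field names, and `verify` helper are all hypothetical.

```python
# Hypothetical stand-in for a trusted external registry.
TRUSTED_REGISTRY = {
    "REG-001": {"name": "Acme Ltd", "country": "GB"},
    "REG-002": {"name": "Globex Inc", "country": "US"},
}


def verify(record, registry, fields=("name", "country")):
    """Return a (status, mismatched_fields) pair for one record."""
    reference = registry.get(record.get("registry_id"))
    if reference is None:
        return "not_found", []
    mismatches = [f for f in fields if record.get(f) != reference.get(f)]
    status = "verified" if not mismatches else "mismatch"
    return status, mismatches


ok = verify({"registry_id": "REG-001", "name": "Acme Ltd", "country": "GB"}, TRUSTED_REGISTRY)
wrong = verify({"registry_id": "REG-002", "name": "Globex Inc", "country": "CA"}, TRUSTED_REGISTRY)
unknown = verify({"registry_id": "REG-999", "name": "Initech", "country": "US"}, TRUSTED_REGISTRY)
```

Keeping "not found" separate from "mismatch" matters in practice: the first may mean the source is incomplete, while the second points to a value that needs correcting.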

7. Conduct Human-in-the-Loop Review

Though automation can improve efficiency, human oversight is needed for complex or sensitive datasets, particularly in healthcare, BFSI (banking, financial services, and insurance), and academic research. In these high-stakes domains, Statswork designs and implements human-in-the-loop QA to keep data in compliance with regulations and ethical standards. [5]

8. Audit and Monitor Data Quality Over Time

- Tracking data quality metrics

- Identifying repeat issues

- Guaranteeing continued compliance
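One possible shape for ongoing monitoring is to compute the same small set of metrics on every audit run and compare runs over time. The sketch below assumes completeness of required fields is the metric of interest; the function names, field names, and sample rows are hypothetical.

```python
from collections import Counter


def is_missing(value):
    return value in (None, "")


def quality_metrics(rows, required_fields):
    """Compute row count, share of fully complete rows, and missing counts."""
    missing = Counter()
    complete_rows = 0
    for row in rows:
        gaps = [f for f in required_fields if is_missing(row.get(f))]
        missing.update(gaps)
        if not gaps:
            complete_rows += 1
    total = len(rows)
    return {
        "row_count": total,
        "completeness": complete_rows / total if total else 1.0,
        "missing_by_field": dict(missing),
    }


def repeat_issues(history, min_runs=2):
    """Fields that had missing values in at least `min_runs` audit runs."""
    seen = Counter()
    for metrics in history:
        seen.update(metrics["missing_by_field"].keys())
    return sorted(field for field, runs in seen.items() if runs >= min_runs)


# Two hypothetical audit runs over the same required fields.
run_1 = quality_metrics(
    [{"email": "a@example.com", "phone": ""}, {"email": "b@example.com", "phone": "1"}],
    ["email", "phone"],
)
run_2 = quality_metrics(
    [{"email": "", "phone": ""}, {"email": "c@example.com", "phone": "2"}],
    ["email", "phone"],
)
recurring = repeat_issues([run_1, run_2])
```

Storing each run's metrics makes trends visible, and `repeat_issues` highlights fields that keep failing so the cause can be fixed at the source.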

Statswork has observed significant improvements across sectors through our data cleansing services:

- Healthcare and Clinical Research: Improving trial data integrity by removing duplicate patient records.

- Finance and Banking: Strengthening regulatory compliance and lowering chances of fraud.

- Retail and eCommerce: Better customer segmentation and more accurate inventory records.

- Education and Academia: Clean and reliable survey data for valid statistical analysis.

Outsourcing Data Validation and Cleaning: Why It Makes Sense

In-house data cleaning requires resources and competencies that many organizations lack. By working with specialists like Statswork, you gain:

- Less internal effort

- Experienced data processors & validation specialists

- Fast turnaround time

- Scalable options tailored to your sector

Conclusion: Clean Data is Smart Data

Regardless of your sector, reliable and validated data is the foundation of successful operations and informed decision-making. With the right tools, techniques, and quality checks, you can ensure your data tells the truth and tells it clearly. With Statswork’s data validation and cleaning services, you receive not only sound data for your organization but also an added competitive advantage.

References

- Guo, M., Wang, Y., Yang, Q., Li, R., Zhao, Y., Li, C., Zhu, M., Cui, Y., Jiang, X., Sheng, S., Li, Q., & Gao, R. (2023). Normal Workflow and Key Strategies for Data Cleaning Toward Real-World Data: Viewpoint. Interactive journal of medical research, 12, e44310. https://pmc.ncbi.nlm.nih.gov/articles/PMC10557005/

- Van den Broeck, J., Cunningham, S. A., Eeckels, R., & Herbst, K. (2005). Data cleaning: detecting, diagnosing, and editing data abnormalities. PLoS medicine, 2(10), e267. https://pmc.ncbi.nlm.nih.gov/articles/PMC1198040/

- Pilowsky, J. K., Elliott, R., & Roche, M. A. (2024). Data cleaning for clinician researchers: Application and explanation of a data-quality framework. Australian critical care: official journal of the Confederation of Australian Critical Care Nurses, 37(5), 827–833. https://pubmed.ncbi.nlm.nih.gov/38600009/

- Love, S. B., Yorke-Edwards, V., Diaz-Montana, C., Murray, M. L., Masters, L., Gabriel, M., Joffe, N., & Sydes, M. R. (2021). Making a distinction between data cleaning and central monitoring in clinical trials. Clinical trials (London, England), 18(3), 386–388. https://pmc.ncbi.nlm.nih.gov/articles/PMC8174009/

- Sharifnia, A. M., Kpormegbey, D. E., Thapa, D. K., & Cleary, M. (2025). First published 27 March 2025. https://doi.org/10.1111/jan.16908

- Dhudasia, M. B., Grundmeier, R. W., & Mukhopadhyay, S. (2023). Essentials of data management: an overview. Pediatric research, 93(1). https://pmc.ncbi.nlm.nih.gov/articles/PMC8371066/